Inside Samsung’s next-gen phone displays: color and health sensing

Why these display prototypes matter

Samsung has again pushed the display boundary with two very different but complementary innovations: one that expands how phones reproduce color, and another that turns the screen itself into a private health sensor. Both ideas point to a broader trend — displays are becoming multipurpose components, not just output layers.

Below I unpack what each prototype does in practical terms, give concrete scenarios for users and developers, and outline the product and regulatory questions companies should be thinking about.

A quick primer on Samsung Display

Samsung Display is the arm that builds OLED panels used across phones, tablets and wearables. Because it supplies many OEMs, it often sets hardware expectations for the industry. Experiments it demonstrates tend to find their way into shipping devices within a few product cycles if they solve a clear problem and can be produced at scale.

Ultrawide color: more than just brighter pixels

The first prototype focuses on color volume and gamut. Instead of simply increasing peak brightness or pixel count, the panel aims to represent a wider slice of the color spectrum — more saturated reds, greens and blues — while preserving detail in shadows and highlights.

Practical implications

- Content creation: Mobile photographers and videographers can capture and preview footage with richer on‑device color fidelity. That reduces the need for heavy post‑processing to reconstruct saturated hues that small displays usually clip or desaturate.

- Streaming and gaming: App developers and service providers can deliver HDR or expanded‑gamut assets that look closer to their intent on phones, making mobile viewing a more faithful experience.

- Branding and marketing: Advertising creatives benefit from displays that reproduce brand colors accurately, improving ad quality and reducing disputes over color mismatch.

Concrete example

Imagine a smartphone filmmaker shooting a sunset for social short‑form video. On a conventional phone the deepest oranges might wash out or drift toward red. With an ultrawide color display, the filmmaker can preview the exact orange and adjust exposure, filters, or grading on the device, saving time and storage during editing.

Tradeoffs and constraints

Wider‑gamut panels can be more power‑hungry when driving saturated pixels. They also demand better color management in software; otherwise content will be translated incorrectly between devices. For developers, that means shipping color‑aware assets or relying on system color profiles and proper rendering pipelines.
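To make the color-management point concrete, here is a minimal Python sketch that checks whether a wide-gamut color can be shown on an sRGB display without clipping. It assumes the content is encoded in Display P3, which this article does not confirm for Samsung's prototype. The function names are illustrative, and the matrices are the commonly published D65 values, rounded.

```python
# Sketch only: assumes Display P3 input, which is an illustrative choice.
# Matrices are the commonly published D65 conversion values, rounded.
P3_TO_XYZ = (
    (0.4865709, 0.2656677, 0.1982173),
    (0.2289746, 0.6917385, 0.0792869),
    (0.0000000, 0.0451134, 1.0439444),
)
XYZ_TO_SRGB = (
    (3.2404542, -1.5371385, -0.4985314),
    (-0.9692660, 1.8760108, 0.0415560),
    (0.0556434, -0.2040259, 1.0572252),
)


def decode(channel):
    """Undo the sRGB transfer curve, which Display P3 also uses."""
    if channel <= 0.04045:
        return channel / 12.92
    return ((channel + 0.055) / 1.055) ** 2.4


def multiply(matrix, vector):
    """Multiply a 3x3 matrix by a 3-component vector."""
    return tuple(sum(m * v for m, v in zip(row, vector)) for row in matrix)


def p3_to_linear_srgb(p3_color):
    """Convert an encoded Display P3 color (0..1) to linear sRGB."""
    linear_p3 = tuple(decode(c) for c in p3_color)
    return multiply(XYZ_TO_SRGB, multiply(P3_TO_XYZ, linear_p3))


def fits_in_srgb(p3_color, tolerance=1e-3):
    """True if no channel leaves the 0..1 range after conversion."""
    return all(-tolerance <= c <= 1 + tolerance
               for c in p3_to_linear_srgb(p3_color))


print(fits_in_srgb((0.5, 0.5, 0.5)))  # mid gray: representable in sRGB
print(fits_in_srgb((1.0, 0.0, 0.0)))  # pure P3 red: outside sRGB
```

A color that fails this check has to be clipped or gamut-mapped on a narrower display, which is why wide-gamut assets usually ship with an sRGB fallback.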

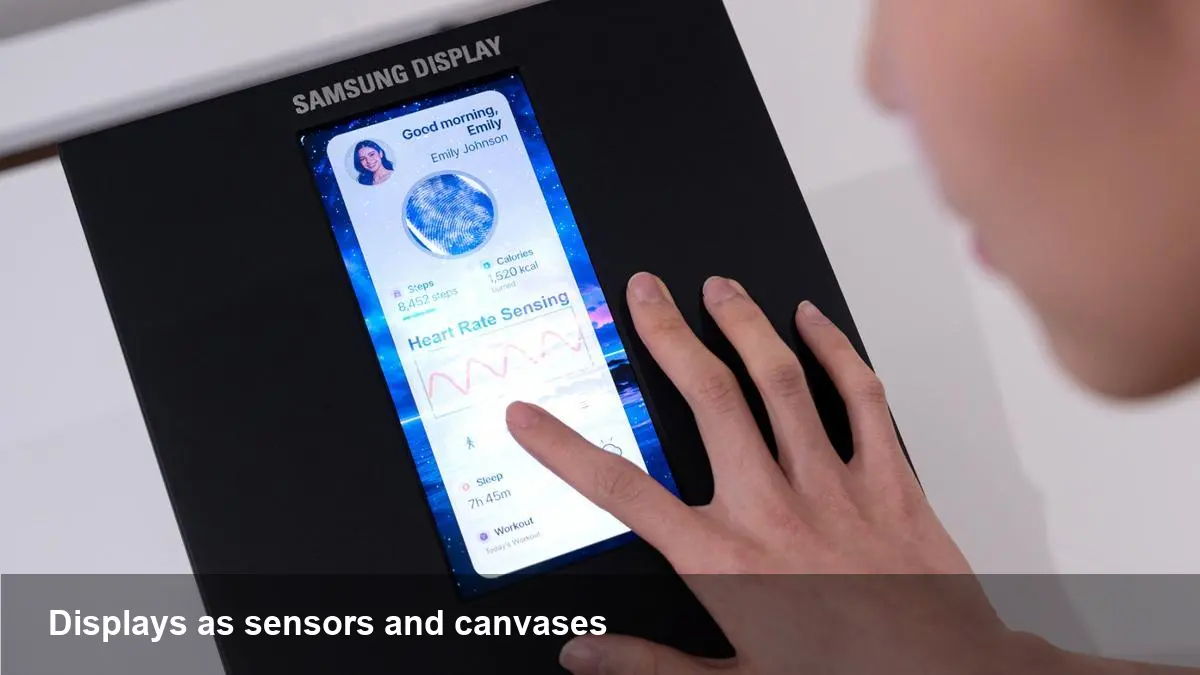

Private health sensing: turning a screen into a biometric gateway

The second prototype integrates sensors beneath the display so the screen can collect physiological signals while keeping the user interface intact. The selling point Samsung showcased is privacy: sensing that happens through the screen rather than broadcasting signals or requiring wearable hardware.

How it likely works (and why that matters)

Under‑display sensing can use optical techniques similar to finger photoplethysmography (PPG), capacitive sensing, or tiny radar‑like modules embedded near the panel. The advantage of doing this under glass is twofold: it preserves a clean industrial design and it makes sensor access optional: users can opt in by placing a finger or wrist on a specific area of the screen.
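As a rough illustration of the PPG idea, the Python sketch below estimates heart rate by timing how often a pulse waveform rises through its own average. It is not Samsung's algorithm. The signal is synthetic, the function names and the 50 Hz sample rate are assumptions, and a real pipeline would need filtering and motion rejection first.

```python
import math


def estimate_bpm(samples, sample_rate_hz):
    """Estimate beats per minute from upward crossings of the signal mean."""
    mean = sum(samples) / len(samples)
    centered = [s - mean for s in samples]
    # Index of every sample where the waveform rises through its mean.
    crossings = [i for i in range(1, len(centered))
                 if centered[i - 1] < 0 <= centered[i]]
    if len(crossings) < 2:
        return None  # not enough beats to measure an interval
    intervals = [(b - a) / sample_rate_hz
                 for a, b in zip(crossings, crossings[1:])]
    return 60.0 / (sum(intervals) / len(intervals))


def synthetic_ppg(bpm, sample_rate_hz, seconds):
    """Clean sine wave standing in for a pulse signal (assumption)."""
    beat_hz = bpm / 60.0
    count = int(sample_rate_hz * seconds)
    return [math.sin(2 * math.pi * beat_hz * n / sample_rate_hz + 0.3)
            for n in range(count)]


signal = synthetic_ppg(bpm=72, sample_rate_hz=50, seconds=10)
print(estimate_bpm(signal, sample_rate_hz=50))
```

On this clean input the estimate lands close to the 72 bpm that generated it. Real finger-on-glass readings are much noisier, which is part of why accuracy remains an open question for this kind of sensing.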

Use cases that make sense today

- Quick biometric checks: resting heart rate and blood oxygen estimates after a workout without wearing a band.

- Passive wellness monitoring: brief breathing or stress assessments during a meditation session while the user rests a finger on the screen.

- Accessibility and triage: at‑home checks for elderly users that pair with telemedicine consultations.

Important caveats

These display‑based measurements will likely be useful for wellness and fitness, but they are not a substitute for clinical devices. Accuracy can be affected by skin tone, motion, ambient light and positioning. Any manufacturer shipping such features will also face medical device regulations and privacy laws, both important factors for startups and app teams building on the platform.

What this means for developers and product teams

- New SDK opportunities — and responsibilities: Expect OEMs to offer SDKs that expose color profiles and sensor APIs. Developers who use them should plan for on‑device processing and strong privacy defaults to avoid leaking sensitive biometrics.

- Content pipeline changes: Creators and streaming apps should adopt color management workflows. That means embedding profiles, testing across gamut ranges, and offering fallbacks for legacy displays.

- Health‑data governance: Apps leveraging screen sensors must design with consent, minimal data retention and clear user-facing explanations. Third‑party health integrations will need formal verification and careful terms of use.
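The "fallbacks for legacy displays" idea from the content pipeline item above can be sketched in a few lines of Python. The gamut labels, file names and the `pick_asset` helper are hypothetical. A real app would read the display's capability from whatever API the platform exposes.

```python
from typing import Dict

# Hypothetical gamut labels, ordered from narrowest to widest.
GAMUT_ORDER = ["srgb", "display-p3", "rec2020"]


def pick_asset(available: Dict[str, str], display_gamut: str) -> str:
    """Return the widest-gamut asset the display can show."""
    if display_gamut not in GAMUT_ORDER:
        display_gamut = "srgb"  # unknown display: assume the legacy gamut
    widest = GAMUT_ORDER.index(display_gamut)
    # Walk from the display's gamut down toward sRGB until an asset exists.
    for gamut in reversed(GAMUT_ORDER[:widest + 1]):
        if gamut in available:
            return available[gamut]
    raise ValueError("no suitable asset; always ship an sRGB fallback")


assets = {"srgb": "hero_srgb.png", "display-p3": "hero_p3.png"}
print(pick_asset(assets, "rec2020"))  # hero_p3.png
print(pick_asset(assets, "srgb"))     # hero_srgb.png
```

Walking downward from the display's gamut means a wider panel never receives an asset it cannot show, and an older panel always gets the sRGB version.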

Concrete developer scenario

A fitness app could add a "tap to check" feature that uses the screen sensor for a quick heart‑rate reading. Behind the scenes the app should run algorithms locally, upload only anonymized summaries (with explicit consent), and provide calibration prompts to improve accuracy.
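One way to read the "local first, consent gated" pattern in that scenario is the Python sketch below. The `Reading` type and `build_upload` function are hypothetical and not part of any announced SDK. Dropping identifiers and raw samples is only a first step toward anonymization, not a guarantee of it.

```python
from dataclasses import dataclass
from statistics import mean
from typing import List, Optional


@dataclass
class Reading:
    bpm: float
    raw_samples: List[float]  # raw sensor data, meant to stay on the device


def build_upload(readings: List[Reading],
                 consent_given: bool) -> Optional[dict]:
    """Return a summary payload, or None when nothing may be uploaded."""
    if not consent_given or not readings:
        return None
    # Only aggregates leave the device: no raw samples, no user identifier.
    return {
        "reading_count": len(readings),
        "mean_bpm": round(mean(r.bpm for r in readings)),
    }


session = [Reading(70.0, [0.1, 0.2]), Reading(74.0, [0.3])]
print(build_upload(session, consent_given=False))  # None
print(build_upload(session, consent_given=True))
```

Keeping the consent check inside the function that builds the payload makes it harder for a later code path to upload data by accident.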

Business and industry implications

- Hardware differentiation: Panels that combine color fidelity and sensing become a visible point of differentiation for OEMs competing on camera/display experience and wearable replacement.

- Content monetization: Better color reproduction can prompt streaming platforms to deliver higher‑quality mobile streams (and potentially new pricing tiers for true HDR/gamut playback).

- Regulatory and partner ecosystems: Phone makers will need partnerships with clinical researchers and possibly regulatory clearances for any health claims — creating opportunities for startups that offer validated algorithms and compliance tooling.

Three forward-looking takeaways

- Displays will act as both output and input: Expect more phones where the screen doubles as a sensor hub (beyond touch and fingerprint) — opening novel UX patterns like touch‑triggered biometrics.

- Color fidelity becomes a workflow concern: As mobile displays get closer to professional color volumes, production and publishing pipelines will need to handle color profiles natively, bringing mobile closer to pro video workflows.

- Privacy and regulation will shape adoption speed: Health sensing is compelling, but real consumer trust and enterprise adoption will hinge on accuracy, clinical validation and robust privacy controls.

If you build apps, hardware or content for mobile, start experimenting now: add color‑managed previews to creative tools and design health features as opt‑in, local‑first experiences. These prototypes are early, but they highlight where next‑generation phones will invest: richer visuals and more intimate, private sensing — all inside the display you stare at every day.