How metasurface lenses let displays switch between glasses-free 3D and 2D

Why a switchable glasses-free display matters

For years the promise of glasses-free 3D (autostereoscopic) displays has hovered around consumer electronics, but compromises in brightness, viewing angle and content support have limited adoption. A new generation of optical components, nanostructured metasurface lenses, shifts those trade-offs. These lenses steer light using subwavelength structures, which lets a single display present a 3D image without glasses and then revert to a standard 2D view when required. That versatility matters for phones, kiosks, automotive HMI, medical imaging and mixed-reality prototypes.

What a metasurface lens is (brief primer)

Metasurfaces are carefully patterned layers where each tiny element (often smaller than the wavelength of light) modifies phase, amplitude or polarization. Unlike traditional refractive or diffractive optics, metasurfaces give designers precise control over how light exits the surface, allowing functions such as beam steering, focus shaping or polarization conversion to be implemented in extremely thin layers.
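To make "modifies phase" concrete, here is a minimal sketch of the phase each element would need to impart for the surface to act as an ideal focusing lens. It uses the standard hyperbolic focusing profile, which is a textbook choice and not necessarily the design used in any particular display. The wavelength, focal length and element pitch are illustrative assumptions.

```python
import math

def metalens_phase(r_um: float, focal_um: float, wavelength_um: float) -> float:
    """Target phase in radians, wrapped to [0, 2*pi), at radius r_um from the lens center."""
    # Hyperbolic profile: compensates the extra path length from radius r to the
    # focal point so that light from every element arrives in phase at the focus.
    path_difference = math.sqrt(r_um**2 + focal_um**2) - focal_um
    phi = -(2 * math.pi / wavelength_um) * path_difference
    # Each element only needs to cover one 2*pi cycle, so wrap the phase.
    return phi % (2 * math.pi)

# Illustrative values only (assumptions, not taken from a real device):
wavelength_um = 0.532   # green light
focal_um = 100.0        # focal length
pitch_um = 0.3          # spacing between elements, below the wavelength

# Phase targets for the first few elements moving outward from the center.
profile = [metalens_phase(i * pitch_um, focal_um, wavelength_um) for i in range(5)]
for i, phi in enumerate(profile):
    print(f"element {i}: r = {i * pitch_um:.1f} um, phase = {phi:.3f} rad")
```

A designer would then pick a nanostructure geometry for each position that produces the required phase. That lookup step depends on the material and fabrication process and is not shown here.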

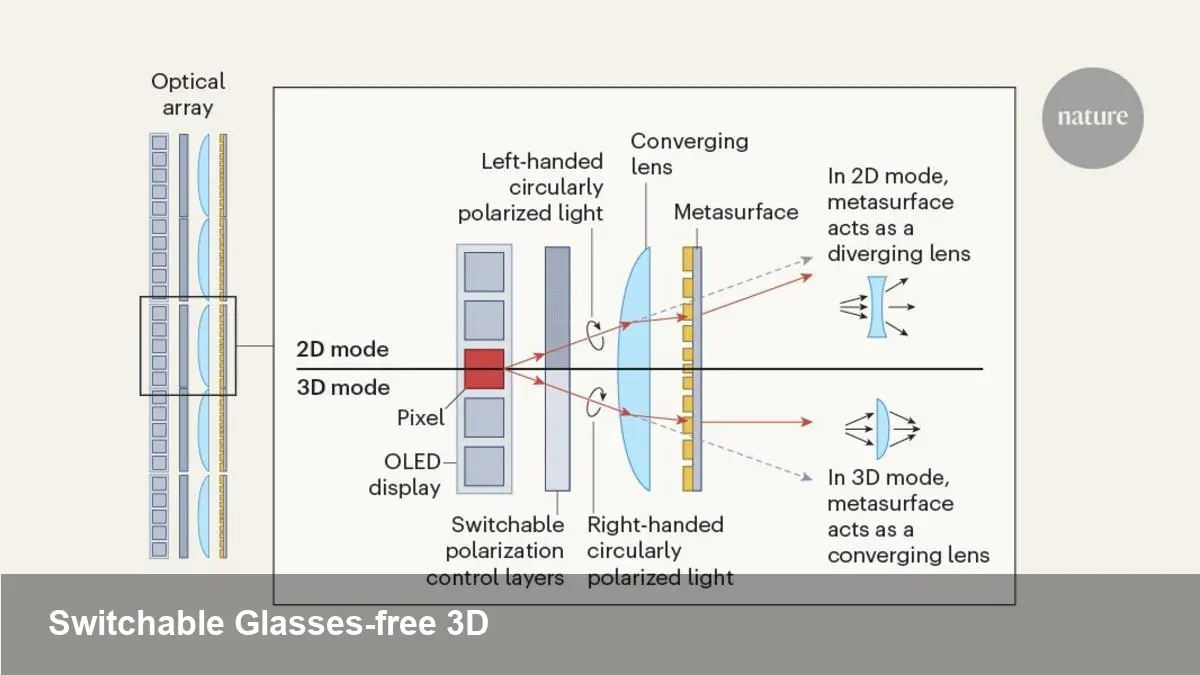

In the context of displays, a metasurface layer mounted in front of an LCD/OLED can redirect light from different pixels into multiple angular views. Each eye then receives a slightly different image, which produces depth perception without eyewear. Crucially, an engineered metasurface can be designed to change behavior: for example, toggling between redistributing light across viewing angles (3D) and passing it straight through (2D).
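A quick geometric check shows how finely the views have to be separated. The sketch below computes the angle the viewer's two eyes subtend at the display. Adjacent views need to be spaced no wider than roughly this angle for each eye to land in a different view. The 63 mm interpupillary distance is an assumed typical adult value, and the viewing distances are illustrative.

```python
import math

def eye_separation_angle_deg(viewing_distance_mm: float, ipd_mm: float = 63.0) -> float:
    """Angle (degrees) subtended at the display by the viewer's two eyes.

    ipd_mm is the interpupillary distance; 63 mm is an assumed typical value.
    """
    return math.degrees(2 * math.atan(ipd_mm / (2 * viewing_distance_mm)))

# Illustrative viewing distances: a handheld phone and a storefront sign.
phone_angle = eye_separation_angle_deg(400.0)     # roughly 9 degrees
signage_angle = eye_separation_angle_deg(2000.0)  # under 2 degrees

print(f"phone at 0.4 m: {phone_angle:.1f} deg between eyes")
print(f"signage at 2 m: {signage_angle:.1f} deg between eyes")
```

The farther the viewer stands, the smaller this angle gets, so displays meant for distant viewing need finer angular control than handheld ones.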

How a display switches between 2D and 3D

There are multiple ways to make the switch practical. The most common approaches combine a programmable optical layer with either electronic control (e.g., voltage-driven active materials) or mechanical staging:

- Active metasurfaces: Materials whose optical response shifts when an electrical signal is applied (liquid crystals, phase-change films, electro-optic polymers) are integrated with a patterned metasurface. Driving the layer changes the effective phase profile, transforming a beam-steering pattern (autostereoscopic) into a uniform pass-through (2D).

- Passive metasurfaces + polarizer control: Some metasurfaces respond differently to polarization. A controllable polarizer or switched waveplate can change the polarization state of the display light, which makes the metasurface alternate between 3D and 2D modes.

- Hybrid mechanical/electrical systems: For prototypes and high-end signage, the metasurface element can physically move or tilt to change optical behavior, though this adds bulk and reliability considerations.

With active control, switching typically takes milliseconds to fractions of a second, which is fast enough for the user or application to pick the best mode on the fly.

Real-world user scenarios

- Smartphones: View a 3D model or depth-enabled photo without glasses for a friend, then switch back to 2D for normal browsing to preserve brightness and battery life.

- In-car displays: A heads-up display or instrument cluster could show 3D navigation cues to the driver, then fall back to a standard 2D instrument panel during critical driving conditions or when passengers need to read it.

- Medical workstations: Radiologists could inspect CT or MRI volumes in glasses-free 3D to better perceive spatial relationships, then flip to 2D for annotations and report writing.

- Retail and signage: Storefront displays can show floating product demos in 3D that attract attention, and then revert to 2D advertising content that is legible from wider angles.

Developer and content workflows

Deploying switchable 3D requires more than the hardware: the content pipeline and runtime must know when to deliver multiple viewports and how to map those views to the display’s angular output.

- Content creation: 3D assets should be authored with multi-view rendering in mind. Instead of a single camera, renderers produce discrete view slices (lightfield slices or layered parallax images) that the metasurface steers into the viewer’s eyes.

- Runtime & engines: Game engines and visualization tools can integrate an SDK to output multiple synchronized camera streams. A simple API call can toggle the output from standard frame mode (2D) to N-view mode (3D) and adapt resolution and bitrate accordingly.

- Eye-tracking integration: To expand the usable eye-box and reduce crosstalk, eye-tracking can dynamically shift the rendered views to follow the position of the viewer's eyes, improving brightness and effective resolution.

- Performance considerations: Generating multiple simultaneous views multiplies GPU cost. Developers should use foveated rendering, LODs, and precomputed parallax for static content to keep compute and thermal budgets manageable.
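The runtime pieces above can be sketched as a small planning function. Everything here is hypothetical: `DisplayMode`, `RenderPlan` and `plan_render` are illustrative names, not part of any real SDK. The sketch also assumes views are interleaved horizontally across the panel, so each view gets 1/N of the columns, which may not match a given metasurface design.

```python
from dataclasses import dataclass
from enum import Enum
from typing import List

class DisplayMode(Enum):
    FLAT_2D = "2d"
    MULTIVIEW_3D = "3d"

@dataclass
class RenderPlan:
    mode: DisplayMode
    camera_offsets_mm: List[float]  # horizontal camera shift for each view
    view_width_px: int
    view_height_px: int

def plan_render(mode: DisplayMode, panel_w: int, panel_h: int,
                n_views: int = 8, baseline_mm: float = 63.0,
                eye_shift_mm: float = 0.0) -> RenderPlan:
    """Decide how many cameras to render and at what resolution (hypothetical API)."""
    if mode is DisplayMode.FLAT_2D:
        # Standard frame mode: one centered camera at full panel resolution.
        return RenderPlan(mode, [0.0], panel_w, panel_h)
    if n_views < 2:
        raise ValueError("3D mode needs at least two views")
    # Spread the cameras evenly across the baseline. eye_shift_mm recenters the
    # fan of views on the tracked midpoint between the eyes (0 means no tracking).
    step = baseline_mm / (n_views - 1)
    offsets = [eye_shift_mm - baseline_mm / 2 + i * step for i in range(n_views)]
    # Assumption: horizontal interleaving, so each view gets 1/N of the columns.
    return RenderPlan(mode, offsets, panel_w // n_views, panel_h)

# Usage: toggle between modes on a 3840x2160 panel.
flat = plan_render(DisplayMode.FLAT_2D, 3840, 2160)
stereo = plan_render(DisplayMode.MULTIVIEW_3D, 3840, 2160, n_views=4, baseline_mm=60.0)
print(flat.camera_offsets_mm, flat.view_width_px)
print(stereo.camera_offsets_mm, stereo.view_width_px)
```

Under this interleaving assumption the total pixel count stays roughly constant, but the scene is still traversed once per view, which is where much of the extra GPU cost comes from. Caching precomputed views for static content avoids repeating that work every frame.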

Business and manufacturing considerations

Metasurfaces are promising but not simple to scale. Fabrication typically uses high-resolution lithography, and integrating active materials adds process complexity. That means early adopters will likely be premium devices and specialized verticals (medical, automotive, digital signage) where differentiation and margin justify higher cost.

On the IP side, metasurface patents and design libraries will be valuable. Companies that combine optical design expertise with display-stack engineering (driver electronics, optical adhesives, coatings) will have an advantage when moving from lab demos to commercial products.

Limitations today

- Brightness penalty: Steering light into discrete angles reduces on-axis luminance unless compensated by higher panel brightness.

- Angular resolution and sweet spots: Autostereoscopic displays have limited eye-box regions. Without eye-tracking, multiple viewers or moving viewers will see artifacts or reduced 3D effect.

- Manufacturing tolerance: Nanopattern precision matters. Yield and coating durability in consumer environments (humidity, temperature) are engineering challenges.
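The brightness penalty can be turned into a simple budget check during prototyping. The sketch below assumes a single measured efficiency factor for the 3D mode, meaning the fraction of panel luminance that reaches the viewer on-axis. That is a deliberate simplification, and every number shown is a placeholder to be replaced with measurements from real hardware.

```python
def required_panel_nits(target_nits: float, optical_efficiency: float) -> float:
    """Panel luminance needed so the viewer still sees target_nits in 3D mode.

    optical_efficiency is an assumed, measured fraction in (0, 1].
    """
    if not 0 < optical_efficiency <= 1:
        raise ValueError("optical_efficiency must be in (0, 1]")
    return target_nits / optical_efficiency

def meets_brightness_target(panel_max_nits: float, target_nits: float,
                            optical_efficiency: float) -> bool:
    """True if the panel has enough headroom to compensate for the 3D-mode loss."""
    return panel_max_nits >= required_panel_nits(target_nits, optical_efficiency)

# Placeholder numbers: a 500-nit target with half the light reaching the viewer.
needed = required_panel_nits(500.0, 0.5)
print(f"panel must reach {needed:.0f} nits in 3D mode")
print("800-nit panel sufficient:", meets_brightness_target(800.0, 500.0, 0.5))
```

Running the panel brighter to compensate also raises power draw and heat, which is one reason falling back to 2D for everyday use is attractive.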

Where this goes next — three practical implications

1) Mobile-first 3D for social and e-commerce: Expect niche phone models that ship with switchable metasurface layers to target AR/social buyers who want immersive content without glasses.

2) Convergence with eye-tracking and AI: Real-time gaze-aware rendering, powered by lightweight models, will reduce compute cost and make autostereoscopic displays practical for more everyday uses.

3) New content formats and standards: As hardware proliferates, we’ll see standardized multi-view formats and SDKs for engines (Unity/Unreal plugins, Web APIs) to simplify developer adoption and cross-device compatibility.

Practical recommendation for product teams

If you’re building a product around switchable glasses-free 3D, start with a targeted use case where 3D adds measurable value (diagnostics, retail showrooms, navigation). Prototype with off-the-shelf panel + metasurface modules, verify eye-box and brightness in real environments, and design the software pipeline early: multi-view asset generation, switching API, and optional eye-tracking.

A metasurface-enabled display that can toggle between glasses-free 3D and standard 2D bridges a long-standing gap in display design — it pairs the wow factor of volumetric imagery with the everyday practicality of conventional screens. The next few years will tell whether the manufacturing and system-level hurdles are surmountable at scale, but for now the technology points to concrete product opportunities where the 3D mode is a feature, not a compromise.