When Almost Half the Uploads Are AI-Generated Music

A quick snapshot

Deezer, the Paris-based streaming service, recently revealed that roughly 44% of new tracks uploaded to its platform each day are created with AI tools. Yet listening behavior tells a different story: AI-generated music accounts for only about 1–3% of total streams, and Deezer says roughly 85% of those streams are flagged as fraudulent and demonetized.

That combination (a flood of AI-originated uploads, almost no listener traction, and a high fraud-detection rate) raises practical questions for artists, platforms, and anyone building services around music discovery or monetization.

Why the numbers matter

Two dynamics are colliding here. First, music-production tools powered by generative models have become cheap and fast: anyone can produce a track with convincing instrumentation or synthetic vocals in minutes. That drives upload volume. Second, platforms must protect listeners, artists, and rights holders from manipulation: spam uploads, wash streaming, or synthetic copies of existing artists.

When a platform reports that nearly half of daily uploads are AI-generated, it shows how far music production has been democratized. But the low share of streams suggests listeners still prefer human songwriting, familiar artists, or professionally curated releases. The high demonetization rate signals that platforms are actively policing gaming behavior, and that many AI uploads are associated with abusive patterns rather than genuine creative activity.

How detection works (and where it struggles)

Platforms use a combination of techniques to identify suspect content and streams:

- Audio fingerprinting and similarity detection to find near-duplicates or suspicious copies of existing songs.

- Metadata analysis to detect mass uploads with similar titles, artists, or distribution patterns.

- Behavioral analytics to spot streaming anomalies (sudden spikes, short listens, repeated plays from narrow IP ranges) that indicate bot farms or wash streaming.

- ML-based classifiers trained to distinguish synthetic timbres or artifacts common to AI-audio pipelines.
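As a rough illustration of the behavioral-analytics item above, the following sketch flags tracks whose plays are mostly short listens or are concentrated in one narrow IP range. The `Stream` record, the `suspicious_tracks` function, and every threshold are hypothetical choices made for this example. They are not values or methods published by Deezer or any other platform.

```python
from collections import Counter
from dataclasses import dataclass
from ipaddress import ip_network


@dataclass(frozen=True)
class Stream:
    track_id: str
    ip: str          # listener IPv4 address
    seconds: float   # how long the play lasted


def suspicious_tracks(streams, short_secs=35.0, max_short_ratio=0.8,
                      max_subnet_ratio=0.5, min_plays=20):
    """Return {track_id: [reasons]} for tracks whose plays look scripted.

    All thresholds are illustrative assumptions, not real platform settings.
    """
    by_track = {}
    for s in streams:
        by_track.setdefault(s.track_id, []).append(s)

    flagged = {}
    for track_id, plays in by_track.items():
        if len(plays) < min_plays:
            continue  # too little data to judge
        reasons = []

        # Signal 1: almost every play ends shortly after it starts.
        short = sum(1 for p in plays if p.seconds < short_secs)
        if short / len(plays) > max_short_ratio:
            reasons.append("mostly short listens")

        # Signal 2: most plays come from a single /24 address range.
        subnets = Counter(ip_network(f"{p.ip}/24", strict=False) for p in plays)
        top_count = subnets.most_common(1)[0][1]
        if top_count / len(plays) > max_subnet_ratio:
            reasons.append("plays concentrated in one /24 range")

        if reasons:
            flagged[track_id] = reasons
    return flagged
```

A heuristic like this would only be one input among several. In practice it would likely be combined with fingerprinting and metadata signals, and a flag would be reviewed before any payout decision.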

But detection isn't perfect. Some challenges:

- Generative models improve quickly; textures and prosody that once signaled synthetic audio are becoming harder to detect reliably.

- Overzealous filters can flag legitimate experimental releases or remix culture, creating false positives and frustrating creators.

- Adversarial producers can tweak models or post-processing to evade automated classifiers.

For developers building music services or rights-management tools, this means detection is an ongoing engineering problem rather than a one-off checkbox.

Four realistic scenarios to consider

- An indie artist releasing experimental tracks: A DIY musician uses AI to generate backing tracks and uploads the results for exposure. The music receives modest plays that reflect genuine fan engagement. This kind of release risks being misclassified if it shares sonic traits with flagged uploads, so the artist benefits from proactive verification (label registry, publisher metadata, or distribution through trusted partners).

- A spam network trying to game payouts: Operators create thousands of AI songs, push them into playlists, and run scripted plays from rented devices. Platforms that detect these patterns will demonetize the streams, but each incident still erodes trust among rights holders and DSPs. Preventing this requires cross-platform intelligence sharing and more robust listener authentication.

- A label experimenting with synthetic features: A small label uses AI to prototype vocals or arrangements before commissioning a human singer. These demo tracks are uploaded privately or to closed groups for review. In a legitimate workflow, AI is a tool, not the product, but blurring the lines publicly without proper attribution risks demonetization and reputational harm.

- A curator or recommender system coping with volume: Playlists and discovery algorithms have to sift through vastly more tracks. Curators will need better filters (submission vetting, verified artist badges, or ‘AI-made’ genre tags) to maintain playlist quality and listener trust.

Practical steps for platforms, creators, and startups

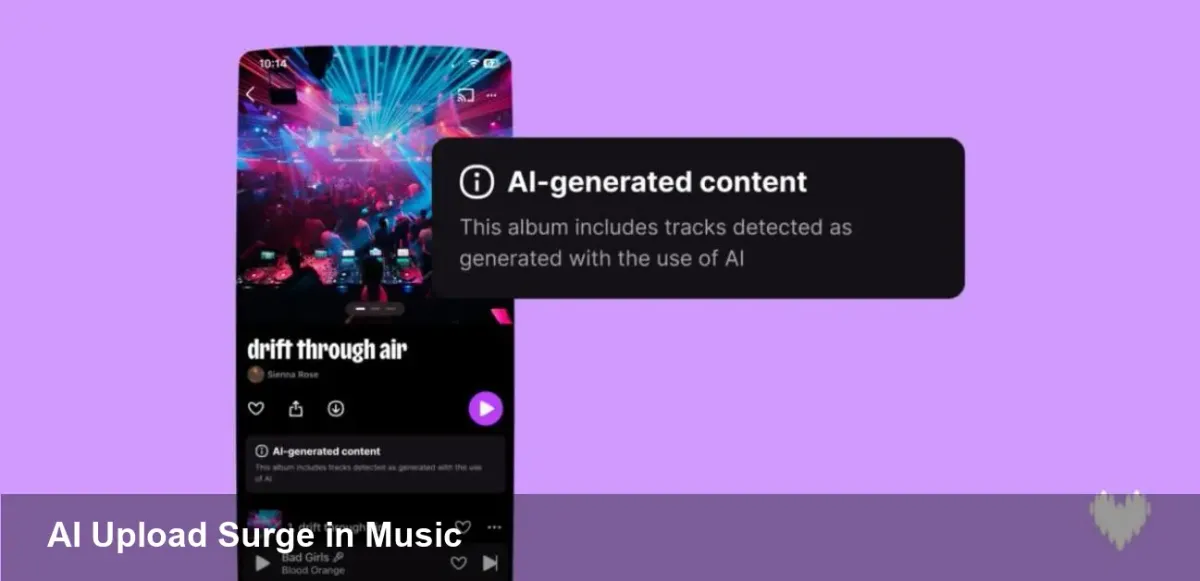

- Platforms should implement transparent labelling: let creators flag when a track contains synthetic elements, and show a small disclosure on streams.

- Improve creator verification: verified profiles, contract-backed distributor accounts, or publisher registrations reduce fraud vectors.

- Invest in cross-industry signals: fingerprint databases, shared abuse lists, and federated machine-learning models to catch coordinated manipulation.

- For creators, use audio watermarking and register works with rights organizations before public release to avoid mistaken demonetization.

- Startups building tools for detection should offer explainability — show why a track was flagged (duplicate audio, suspicious streams, etc.) so humans can override bad decisions.
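To make the explainability point concrete, here is a minimal sketch of a flag record that keeps the reasons for a decision next to the decision itself and lets a human reviewer override it. The `FlagDecision` class, its field names, and the reason codes are all hypothetical. They illustrate one possible shape and do not describe any existing tool's schema.

```python
from dataclasses import dataclass, field
from typing import List, Optional, Tuple


@dataclass
class FlagDecision:
    """Hypothetical record of why a track was flagged (not a real schema)."""
    track_id: str
    reasons: List[Tuple[str, str]] = field(default_factory=list)  # (code, evidence)
    overridden_by: Optional[str] = None

    def add_reason(self, code: str, evidence: str) -> None:
        self.reasons.append((code, evidence))

    @property
    def active(self) -> bool:
        # A flag applies only if there is a reason and no human override.
        return bool(self.reasons) and self.overridden_by is None

    def override(self, reviewer: str) -> None:
        # Reasons are kept after an override so the decision stays auditable.
        self.overridden_by = reviewer

    def explain(self) -> str:
        if not self.reasons:
            return f"{self.track_id}: no flags"
        status = "flagged" if self.active else f"cleared by {self.overridden_by}"
        details = "; ".join(f"{code} ({evidence})" for code, evidence in self.reasons)
        return f"{self.track_id}: {status}: {details}"
```

For example, after `add_reason("duplicate_audio", "matches trk-0")`, calling `explain()` returns a single line that names the track, its status, and the evidence, which is the kind of summary a reviewer or creator could act on.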

Business and product implications

- Monetization integrity is at stake. If platforms can’t trust streams, payouts become contentious, and major labels may push for stricter ingestion controls or premium verification tiers.

- Discovery experiences could suffer unless services adapt. Flooding users’ home feeds with low-quality synthetic tracks would damage retention, so editorial triage will become more important.

- New product categories will emerge: certified synthetic marketplaces, provenance-as-a-service APIs, and tools that embed robust, tamper-evident metadata into AI-generated works.
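One way to picture tamper-evident metadata is a provenance record that is bound to the audio by a hash and then signed. The sketch below uses an HMAC with a shared secret from Python's standard library purely for brevity. The function names and the metadata fields are assumptions made for this example, and a real provenance service would more plausibly use public-key signatures so that anyone can verify a record without holding the secret.

```python
import hashlib
import hmac
import json


def _canonical(record):
    # Stable serialization so the same record always signs to the same bytes.
    return json.dumps(record, sort_keys=True, separators=(",", ":")).encode()


def sign_provenance(audio_bytes, metadata, key):
    """Bind metadata to the audio via its SHA-256 hash, then sign the record."""
    record = dict(metadata, audio_sha256=hashlib.sha256(audio_bytes).hexdigest())
    signature = hmac.new(key, _canonical(record), hashlib.sha256).hexdigest()
    return {"record": record, "signature": signature}


def verify_provenance(audio_bytes, signed, key):
    """Return True only if neither the audio nor the metadata was altered."""
    record = signed["record"]
    if record.get("audio_sha256") != hashlib.sha256(audio_bytes).hexdigest():
        return False
    expected = hmac.new(key, _canonical(record), hashlib.sha256).hexdigest()
    return hmac.compare_digest(expected, signed["signature"])
```

Changing either the audio bytes or any metadata field (for instance, flipping a hypothetical `contains_synthetic_vocals` flag) causes verification to fail, which is the property "tamper-evident" refers to. Note that this detects edits to the record. It does not survive re-encoding of the audio, which is the problem audio watermarking tries to address.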

Three things to watch next

- The moderation arms race will accelerate: As generative models evolve, platform detection and evasion techniques will chase each other. Expect tighter verification processes and more frequent updates to classifier models.

- Commercial labeling and marketplaces for synthetic music: Legitimate use-cases (licensed synthetic vocals, custom production packs) will motivate marketplaces that certify provenance and clear rights, creating revenue channels for both AI toolmakers and rights-holders.

- Policy and rights frameworks will adapt: Regulators and rights bodies are likely to propose clearer rules on attribution, royalties for synthetic copies, and liability for platforms that enable fraud. That could reshape distribution economics and contract terms.

AI-generated music is no longer a novelty; it's part of the upload stream. But quantity hasn't translated into listener demand yet, and platforms are responding by policing suspicious activity. For creators and product teams, the takeaway is clear: embrace generative tools thoughtfully, plan for provenance and verification, and expect the rules of engagement to keep changing as both models and defenses mature.