iOS 17.4 Adds Eight Emojis and AI Playlists — What It Means

Small set, big signal: the update at a glance

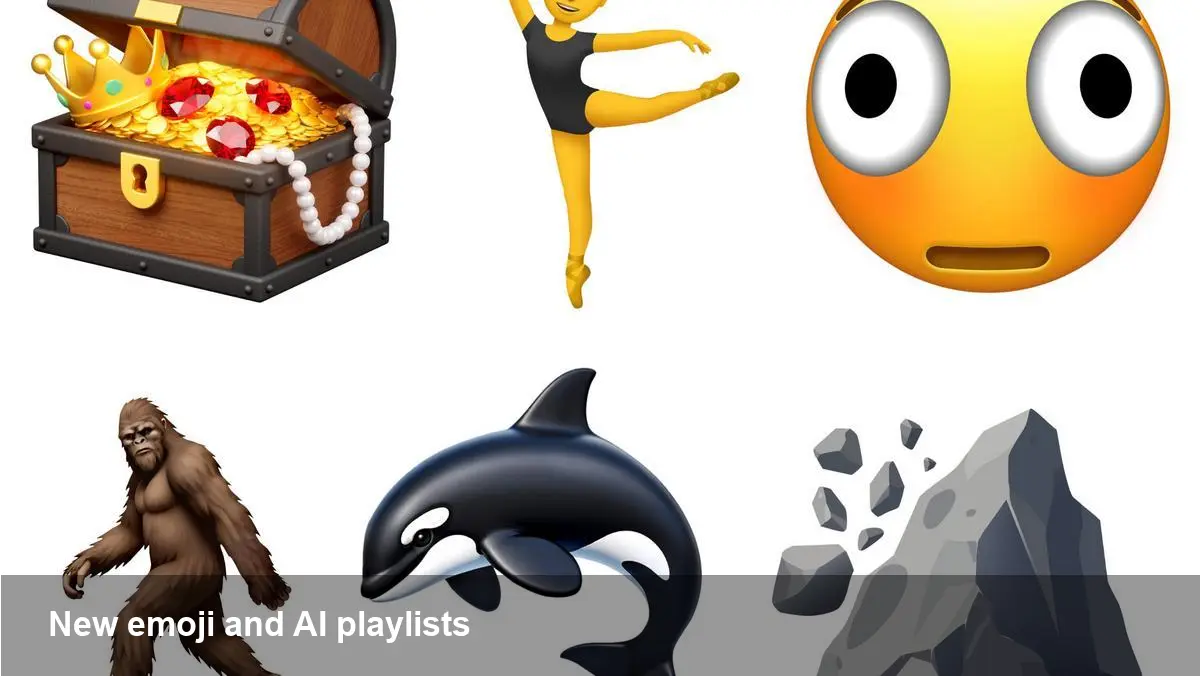

Apple’s recent iOS 17.4 update for iPhone and iPad delivers a compact but meaningful package: eight new emoji characters and expanded Apple Music features that include AI-generated playlists. These aren’t headline-grabbing platform shifts, but together they reveal Apple’s dual approach to user experience: incremental expressive tools plus AI-driven personalization.

Below I unpack what’s actually changing for end users, what developers and music businesses should watch, and where this could lead next.

What’s included in the update

- Eight new emoji glyphs added to the system palette (available once devices upgrade to iOS 17.4 / iPadOS 17.4).

- New Apple Music functionality that leverages generative AI to create personalized playlists — a step beyond static algorithmic mixes into on-demand, query-driven curation.

Both arrive as part of Apple’s continuing cadence of platform refinement: small features that reach a large installed base as soon as devices update.

Why eight emojis still matter

Emoji additions often look cosmetic, but they influence communication and product behavior in several ways:

- User expression: New emoji options let people nuance tone and intent in chat, social posts, and comments without leaving the system keyboard.

- UX consistency: Because the glyphs ship with the OS, third-party apps that use system text rendering (messaging, social networks, note apps) pick them up without an app update. Apps that bundle their own emoji font or image set still need to update it.

- Cross-platform friction: Each vendor rolls out new emoji on its own schedule, so characters new to iOS 17.4 may render as blank boxes, as separate component emoji, or with a different design on Android or web clients that have not yet shipped them. That matters for designers and support teams who manage international or cross-device conversations.

Example: A community app that relies on emoji reactions should test how the new characters render on older devices or non-Apple clients and consider fallback text for critical flows.
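
A minimal sketch of that fallback idea, written server-side in Python. Everything here is illustrative: the `NEW_EMOJI` table, the `with_fallback` helper, and the notion that the server knows which emoji version a client supports (for example, inferred from its reported OS version) are assumptions, not part of any Apple API. The two sequences in the table are Emoji 15.1 entries used as examples; check them against the Unicode emoji data files before relying on them.

```python
# Hypothetical fallback table:
# emoji sequence -> (emoji version that introduced it, text label).
NEW_EMOJI = {
    "\U0001F426\u200D\U0001F525": ((15, 1), ":phoenix:"),  # bird + ZWJ + fire
    "\U0001F34B\u200D\U0001F7E9": ((15, 1), ":lime:"),     # lemon + ZWJ + green square
}


def with_fallback(text: str, client_emoji_version: tuple) -> str:
    """Replace emoji the client is assumed not to render with a text label."""
    for sequence, (introduced_in, label) in NEW_EMOJI.items():
        # Tuples compare element by element, so (15, 0) < (15, 1).
        if client_emoji_version < introduced_in:
            text = text.replace(sequence, label)
    return text


message = "Nice comeback \U0001F426\u200D\U0001F525"
print(with_fallback(message, (15, 0)))  # label substituted for older clients
print(with_fallback(message, (15, 1)))  # left unchanged for current clients
```

Keeping the substitution on the server (or in a shared rendering layer) means critical flows such as reaction counts stay readable even when the glyph itself is missing.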

Apple Music’s AI playlists — a practical look

This update extends Apple Music’s personalization toolkit by allowing users to generate playlists using generative AI. Practically, users can ask for a playlist tailored to mood, activity, or a specific sonic request (e.g., “acoustic sunset road-trip mix” or “energetic 45-minute workout set”).

Use cases:

- Workout and focus: A runner can request a BPM-specific playlist without curating tracks manually.

- Event DJing: Someone hosting a dinner or party can quickly create a set with a desired vibe or energy curve.

- Discovery: Casual listeners can surface tracks they wouldn’t find through purely popularity-driven charts.

From a UX perspective, AI-generated playlists convert a multi-step curation process into a single interaction. That reduces friction for casual users and raises expectations for instant, high-quality results.
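
To make the "multi-step curation" concrete, here is a rough sketch of what a request like "energetic 45-minute workout set" reduces to once it has been translated into constraints. This is not how Apple Music generates playlists; the `Track` type, the `build_set` function, and the sample catalog are all hypothetical, and a real system would also weigh taste, ordering, and licensing.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Track:
    title: str
    bpm: int
    seconds: int


def build_set(tracks, bpm_range, target_seconds):
    """Greedily add in-range tracks, skipping any that would overshoot the target."""
    low, high = bpm_range
    chosen, total = [], 0
    for track in tracks:
        if low <= track.bpm <= high and total + track.seconds <= target_seconds:
            chosen.append(track)
            total += track.seconds
    return chosen, total


# Made-up catalog for illustration only.
catalog = [
    Track("A", 172, 1200),
    Track("B", 120, 900),   # too slow for the requested range
    Track("C", 168, 1000),
    Track("D", 175, 800),   # would push the set past 45 minutes
]
chosen, total = build_set(catalog, bpm_range=(165, 180), target_seconds=45 * 60)
print([t.title for t in chosen], total)
```

Doing this by hand means filtering, sorting, and adding up durations; the generative feature collapses those steps into the single prompt.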

What developers and music services should consider

If you build apps that integrate with Apple Music or provide content discovery, this change has practical implications:

- Metadata and tagging matter more: AI curation depends on accurate genre tags, mood metadata, and tempo/BPM fields. Labels and independent artists should ensure their catalog metadata is detailed and consistent.

- Integration surfaces: Developers using MusicKit should explore whether Apple exposes programmatic hooks for AI-generated lists or whether these remain user-side features. If Apple offers APIs, apps could surface AI playlists as part of discovery flows.

- Testing across devices: Make sure your app handles both the visual side (new emoji glyphs) and the playlist behaviors gracefully. If a playlist is generated but contains region-locked tracks, provide fallbacks.
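
For the metadata point, a small lint pass over catalog records is a cheap place to start. The sketch below assumes records are plain dictionaries with `genre`, `mood`, and `bpm` keys; those field names and the plausible-tempo bounds are my assumptions, not a schema that Apple or any distributor publishes.

```python
REQUIRED_FIELDS = ("genre", "mood", "bpm")  # hypothetical field names
BPM_BOUNDS = (40, 250)  # arbitrary sanity range, adjust to your catalog


def metadata_problems(track: dict) -> list[str]:
    """Return a list of human-readable problems found in one track record."""
    problems = []
    for field in REQUIRED_FIELDS:
        value = track.get(field)
        if value is None or value == "":
            problems.append(f"missing {field}")
    bpm = track.get("bpm")
    low, high = BPM_BOUNDS
    if isinstance(bpm, (int, float)) and not low <= bpm <= high:
        problems.append(f"implausible bpm: {bpm}")
    return problems


print(metadata_problems({"genre": "folk", "mood": "", "bpm": 400}))
print(metadata_problems({"genre": "folk", "mood": "calm", "bpm": 92}))
```

Running a check like this before delivery catches the gaps (empty mood tags, tempo typos) that are most likely to keep a track out of a tempo- or mood-based request.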

Privacy, rights, and quality trade-offs

AI-driven music curation raises several practical questions:

- On-device vs cloud: If playlist generation happens in the cloud, that may have different privacy implications than on-device inference. Users and developers must understand what data is used (listening history, liked songs, timestamps) and whether prompts are stored.

- Licensing and regional availability: AI can assemble pleasing playlists, but if tracks aren’t available in a listener’s region, the experience degrades. Clear messaging and graceful substitutions matter.

- Explainability and control: Users may want transparency about why the system chose certain tracks and the ability to tweak parameters (e.g., more discovery, less popular).

Apple’s reputation for emphasizing privacy suggests some safeguards will be in place, but teams building on top of Apple Music should plan for edge cases and user questions.
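
One of those edge cases, regional availability, can be handled with a substitution pass before a playlist is shown. The following is a sketch under stated assumptions: `available_in` (track ID to set of region codes) and `substitutes` (track ID to ordered alternatives) are hypothetical lookups your app would have to populate, and `playable_playlist` is an illustrative name rather than a MusicKit call.

```python
def playable_playlist(track_ids, available_in, substitutes, region):
    """Return (playlist, notes): swap or drop tracks unavailable in `region`."""
    playlist, notes = [], []
    for track_id in track_ids:
        if region in available_in.get(track_id, set()):
            playlist.append(track_id)
            continue
        # Take the first substitute that is available in the region, if any.
        replacement = next(
            (s for s in substitutes.get(track_id, [])
             if region in available_in.get(s, set())),
            None,
        )
        if replacement is not None:
            playlist.append(replacement)
            notes.append(f"{track_id} replaced by {replacement}")
        else:
            notes.append(f"{track_id} unavailable in {region}")
    return playlist, notes


# Made-up availability data for illustration only.
available_in = {"t1": {"US", "DE"}, "t2": {"US"}, "t2-live": {"DE"}, "t3": {"US"}}
substitutes = {"t2": ["t2-live"]}
playlist, notes = playable_playlist(["t1", "t2", "t3"], available_in, substitutes, "DE")
print(playlist)
print(notes)
```

Returning the notes alongside the playlist gives the UI something to show the user, which covers the "clear messaging" half of the problem as well as the substitution half.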

Limitations and friction points

- Cross-platform emoji rendering will still lag: Android and web platforms update at different paces, so expect mismatches for months after the release.

- AI quality can be inconsistent: Generative playlists are only as good as the underlying models and metadata. Expect hits and misses early on.

- API access uncertainty: If Apple doesn’t provide developer-facing endpoints for AI playlist generation, third-party apps may be limited to surfacing user-initiated content.

Business and competitive implications

For Apple, the combined move strengthens two areas:

- Platform stickiness: New emoji reinforce the native keyboard and messaging experience, nudging users toward Apple’s ecosystem for social and communication features.

- Music differentiation: AI-generated playlists are a clear play to differentiate Apple Music from other services that already use algorithmic mixes (Spotify, YouTube Music), by making curation more conversational and creative.

For artists and labels, AI curation creates both opportunity and risk: exposure through personalized playlists can drive streams, but the selection logic may favor certain metadata or catalog properties over others.

Looking ahead: three implications to watch

- Evolving expectations for curation: Users will increasingly expect on‑demand, high-quality playlists generated from natural language prompts across streaming services.

- Metadata as strategy: Accurate tagging and richer track descriptors will become a competitive advantage for artists and labels seeking discoverability in AI-driven systems.

- Cross-device standardization pressure: As platforms iterate faster on expressive features, pressure will mount on the Unicode Consortium, OS vendors, and web platforms to accelerate glyph rollouts and avoid fragmentation.

If you manage a music app, a label, or a community platform, prioritize metadata hygiene, test cross-device emoji behavior, and prepare UX flows that explain AI choices to users. For most users, this update will feel like a small burst of convenience — a few new emoji to express yourself and smarter playlists that can save time and surface new tracks — but it also signals where Apple is investing: expressive input and AI-assisted personalization.
