When DLSS 5 'glow-ups' cross the line: what gamers and developers should know

Why a player revolt over a graphics update?

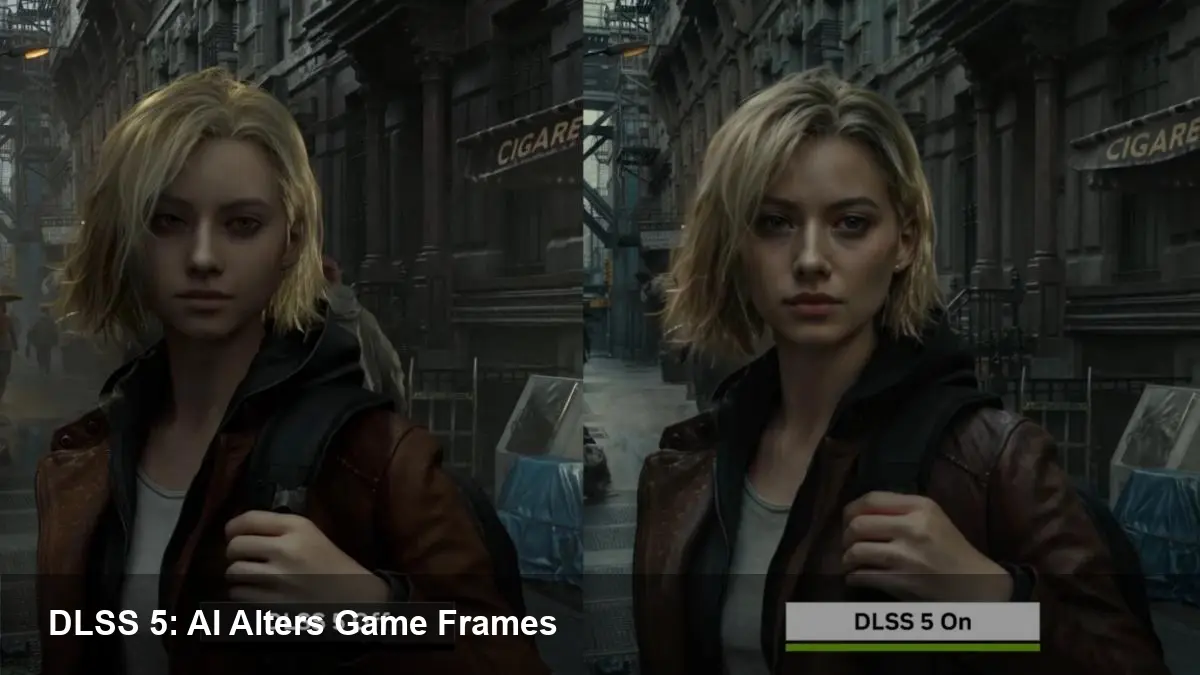

Nvidia's latest step in real-time image enhancement — commonly being called DLSS 5 — expands frame-generation and image refinement with generative AI techniques. Early demos and community tests show it can conjure additional detail into frames rather than just upscaling pixels. For some players that looks like a faster, cheaper way to higher fidelity. For many others it looks like the game is being rewritten by an algorithm without consent.

The backlash stems less from a technical shortcoming than from a collision of values: players expect a developer's artistic choices to remain intact. When an AI selectively sharpens faces, invents reflections, or remaps texture details, it can change mood, narrative cues, and even gameplay-critical visual signals.

A quick primer: where DLSS started and where it’s going

DLSS began as an upscaling and reconstruction system that used neural networks to extract higher-resolution-looking frames from fewer rendered pixels. Over iterations it added temporal accumulation, optical-flow-assisted frame interpolation, and low-latency features. The new generation moves from reconstruction toward generative frame enhancements, essentially using a trained model to “fill in” and sometimes invent content while producing intermediate frames.

That technical leap explains both the excitement and the alarm. Generative models can produce impressive detail and smoother motion at a fraction of the rendering cost, but they can also hallucinate elements that weren’t in the artist’s original frame.

What players are seeing in real examples

- Faces become slightly different: smoothing, altered eyebrow shapes, or softened expressions that change emotional nuance.

- Textures gain extra micro-detail or bloom where none existed, which can make surfaces read as newer or cleaner than designed.

- Environmental lighting and reflections appear exaggerated or misplaced, sometimes breaking continuity between frames.

- UI elements or HUD overlays can flicker or blur in ways that hurt readability.

These are not always catastrophic. In many scenes DLSS-like enhancement still improves perceived fidelity. But when it edits characters or alters an intentionally stylized scene, players rightly ask whether they are looking at the game the developer shipped.

Practical implications for developers

If you ship a game with DLSS 5 support, expect to take on new responsibilities:

- Offer clear toggles and fidelity modes. At minimum, players want a clear choice between the artist’s original frames and AI-enhanced ones. Beyond that, consider a middle-ground “faithful” mode that prioritizes minimal alteration.

- Integrate perceptual QA into the pipeline. Automated pixel-diff tests won’t catch subjective changes. Add human review checkpoints for cutscenes, cinematics, faces, and key gameplay moments where visual cues matter.

- Expose provenance metadata. Let the game report when a frame or asset was altered by the generative model. That helps modders, speedrunners, and reviewers know what they’re seeing.

- Train or tune models with developer-controlled datasets. If possible, allow studios to influence the model’s output: bias it toward preserving tone and intent rather than aggressively “improving” everything.

- Test UI and readability. UI elements often aren’t meant to be aesthetically enhanced; they need to remain legible. Lock UI layers out of generative processing if necessary.
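The toggle, provenance, and UI-lock items above can be sketched together in a few lines. This is only an illustration under stated assumptions: the mode names, the layer names (`ui`, `hud`, `subtitles`, `characters`), and the `plan_frame` helper are all hypothetical and do not correspond to any Nvidia or engine API. The idea is that the chosen fidelity mode decides which render layers may be handed to a generative model, and that each frame carries a small record of what was allowed to change.

```python
from dataclasses import dataclass, asdict
from enum import Enum
import json


class FidelityMode(Enum):
    ORIGINAL = "original"   # no generative processing at all
    FAITHFUL = "faithful"   # enhancement allowed, but sensitive layers stay untouched
    ENHANCED = "enhanced"   # full generative enhancement (UI still locked)


# Hypothetical layer names; a real engine would use its own render-pass naming.
ALWAYS_LOCKED = {"ui", "hud", "subtitles"}
FAITHFUL_EXTRA_LOCKS = {"characters"}  # assumption: faces are the most sensitive content


@dataclass
class FrameProvenance:
    frame_index: int
    mode: str
    generated: bool        # True if the whole frame was synthesized (interpolated)
    altered_layers: list   # layers the model was permitted to modify


def plan_frame(frame_index, mode, layers, is_interpolated):
    """Pick the layers a generative pass may touch and record provenance for the frame."""
    if mode is FidelityMode.ORIGINAL:
        # Original mode: nothing is altered and no frames are synthesized.
        return FrameProvenance(frame_index, mode.value, False, [])

    locked = set(ALWAYS_LOCKED)
    if mode is FidelityMode.FAITHFUL:
        locked |= FAITHFUL_EXTRA_LOCKS

    allowed = [name for name in layers if name not in locked]
    return FrameProvenance(frame_index, mode.value, is_interpolated, allowed)


def provenance_json(record):
    """Serialize a provenance record so overlays, capture tools, or mods could read it."""
    return json.dumps(asdict(record))
```

Calling `plan_frame(120, FidelityMode.FAITHFUL, ["world", "characters", "hud"], True)` would yield a record that permits changes to the `world` layer only, which a reviewer or capture tool could then read through `provenance_json`.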

Implementing those steps costs development time, but they protect your brand and reduce community friction.
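As one illustration of the perceptual QA step, a team could use a cheap automated check to decide which frames go to human reviewers first. The sketch below is not a perceptual metric and does not replace human review; it only measures how much the enhanced frame differs from the natively rendered one inside regions the team has marked as sensitive. The frame format (2D lists of 0-255 luminance values), the region names, and the threshold are assumptions chosen for the example.

```python
def region_mean_abs_diff(reference, enhanced, region):
    """Mean absolute luminance difference inside region.

    region is (top, left, bottom, right) with exclusive bottom/right bounds.
    """
    top, left, bottom, right = region
    total = 0
    count = 0
    for y in range(top, bottom):
        for x in range(left, right):
            total += abs(reference[y][x] - enhanced[y][x])
            count += 1
    return total / count if count else 0.0


def flag_for_review(reference, enhanced, regions, threshold):
    """Return the names of sensitive regions whose average change exceeds threshold."""
    flagged = []
    for name, box in regions.items():
        if region_mean_abs_diff(reference, enhanced, box) > threshold:
            flagged.append(name)
    return flagged


def demo():
    """Tiny synthetic example: only the 'face' region is altered."""
    reference = [[0] * 4 for _ in range(4)]
    enhanced = [row[:] for row in reference]
    for y in range(2):
        for x in range(2):
            enhanced[y][x] = 40  # simulate the model changing the face area
    regions = {"face": (0, 0, 2, 2), "hud": (2, 2, 4, 4)}  # hypothetical region names
    return flag_for_review(reference, enhanced, regions, threshold=8)
```

In this toy case `demo()` flags only the `face` region, so that frame would be queued for a human to judge whether the change actually alters expression or mood.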

Business and platform-level consequences

For platforms and publishers, DLSS-style frame generation is a lever to lower hardware requirements and reach more customers. If a console or cloud stream can deliver smoother visuals with fewer GPU cycles, that’s a clear commercial benefit.

But there are tradeoffs:

- Brand trust: players may defect if they feel an AI is altering beloved franchises in ways that betray the original creators.

- Legal and IP gray areas: generative enhancements trained on third-party data could raise questions about licensing and model provenance down the road.

- Competitive arms race: studios that control “faithful” pipelines will market authenticity as a differentiator; others will boast higher frame rates or perceived fidelity.

For multiplayer titles, inconsistent visual presentation becomes a usability and fairness issue — especially if visual cues linked to gameplay are altered unpredictably.

How to talk to your community about generative frame tech

Transparency is essential. Communicate what the feature does, show before/after examples, and explain the fail-safes you’ve built. Give players options and listen to early feedback. A measured rollout (beta opt-in, “experimental” label) reduces surprise and gives dev teams time to correct edge cases.

Three implications that matter long term

- Content authenticity will matter more. As generative enhancements become common, games (and other visual media) will need provenance and authenticity markers so creators and consumers know whether content is original or AI-augmented.

- New QA disciplines will emerge. Visual-perception testing, A/B community testing, and model auditing will become routine parts of release checklists alongside performance and localization.

- Creative tooling will bifurcate. Some developers will embrace generative models as creative assistants—automating texture polish or creating LODs—while others will insist on deterministic pipelines to preserve artistic control.

Generative frame enhancement is technically impressive and promises real economic benefits for developers and hardware vendors. But the community reaction to aggressive “glow-ups” is a timely reminder: graphics are not just pixels, they carry authorship and meaning. If studios and platform providers want to use these tools without alienating players, they’ll need clear controls, rigorous QA, and honest communication.

How a studio balances those priorities will determine whether generative frame tech becomes a welcome upgrade or a source of ongoing controversy for gamers and creators alike.