How NVIDIA’s DLSS 4.5 Multi‑Frame Generation Boosts FPS and Changes Workflows

Why DLSS 4.5 matters now

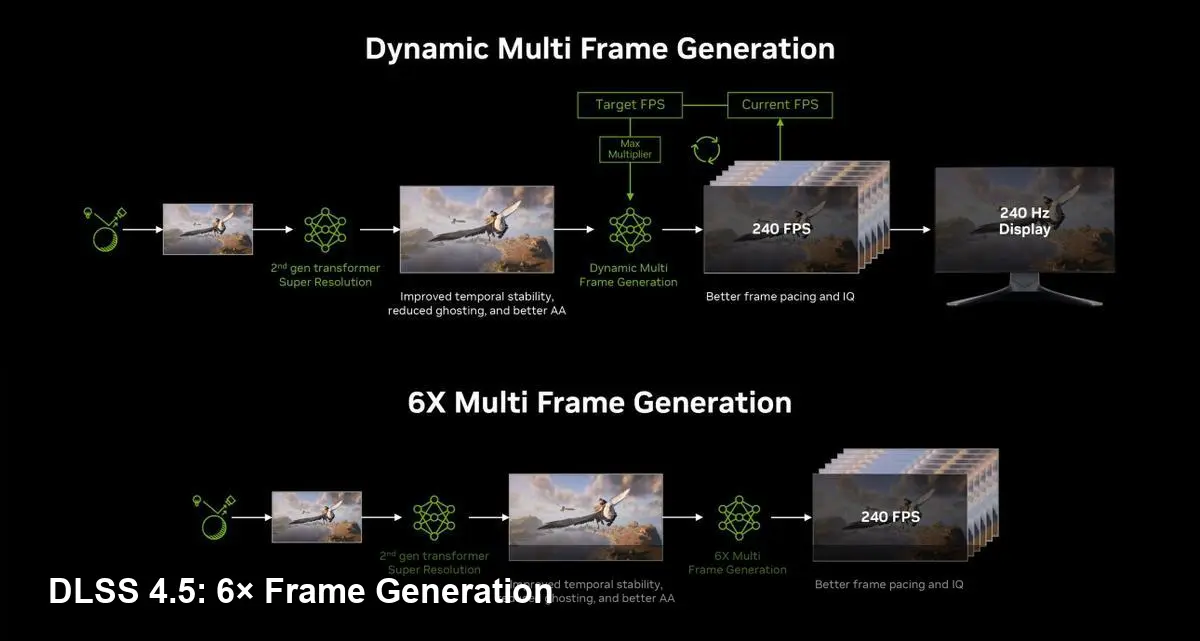

NVIDIA has rolled out DLSS 4.5 through an NVIDIA app beta update, bringing Dynamic Multi-Frame Generation (MFG) and a new 6× mode to users. These additions extend the company's frame-generation toolkit. DLSS 3's frame generation inserted one synthetic frame per rendered frame, and DLSS 4's Multi-Frame Generation raised that to as many as three. The new 6× mode goes up to five, further multiplying the perceived framerate.

For players and developers, this is more than a raw performance number. It changes how GPUs, engines and input systems balance throughput, latency and visual fidelity.

Quick primer: how frame generation works

At a high level, frame-generation algorithms take rendered frames, motion vectors and other temporal information and use neural networks (running on dedicated hardware in recent NVIDIA GPUs) to synthesize intermediate frames. Historically, DLSS combined upscaling and temporal reconstruction to raise output resolution and stabilize the image. Frame generation layers on top of that, increasing the number of frames shown on the display without a proportional increase in rendering workload.
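To make "synthesize intermediate frames" concrete, here is a toy motion-compensated interpolation on a single row of grayscale pixels. This is not NVIDIA's method: DLSS uses a trained neural network and handles occlusions, which this sketch ignores. The function name `interpolate_row` and the assumption that one motion vector per output pixel is good enough are illustrative only.

```python
def interpolate_row(prev, nxt, motion, t):
    """Synthesize a row of pixels at fractional time t (0 < t < 1) between
    prev (t = 0) and nxt (t = 1).

    motion[x] is the assumed displacement in pixels from prev to nxt,
    sampled at the output position as a simplification.
    """
    width = len(prev)
    out = []
    for x in range(width):
        mv = motion[x]
        # Step back along the motion vector into the previous frame...
        xp = min(max(round(x - t * mv), 0), width - 1)
        # ...and forward along it into the next frame (clamped at the edges).
        xn = min(max(round(x + (1 - t) * mv), 0), width - 1)
        # Blend both samples, weighting the temporally closer frame more.
        out.append((1 - t) * prev[xp] + t * nxt[xn])
    return out


# A bright pixel moves from x=2 to x=6; halfway through it should sit at x=4.
prev = [0, 0, 1, 0, 0, 0, 0, 0, 0, 0]
nxt = [0, 0, 0, 0, 0, 0, 1, 0, 0, 0]
mid = interpolate_row(prev, nxt, [4] * 10, 0.5)
```

When the motion vectors are accurate, the generated frame places the object where it would have been; when they are wrong or missing, the same arithmetic produces the ghosting and smearing discussed later.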

DLSS 4.5's Dynamic MFG builds on this idea, outputting multiple generated frames for each frame the GPU actually renders. In 6× mode, five synthetic frames are produced alongside each rendered one, potentially turning a 60-FPS render rate into a 360-FPS output stream.
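The arithmetic is simple: an N× mode presents N frames per rendered frame, N − 1 of them generated. The article does not describe how Dynamic MFG picks its multiplier, so `pick_multiplier` below is a hypothetical policy that chooses the smallest multiplier reaching the display's refresh rate, capped at 6×.

```python
def output_fps(render_fps: float, multiplier: int) -> float:
    """Presented frames per second when each rendered frame is followed by
    (multiplier - 1) generated frames."""
    return render_fps * multiplier


def generated_per_rendered(multiplier: int) -> int:
    """Synthetic frames inserted after each rendered frame."""
    return multiplier - 1


def pick_multiplier(render_fps: float, refresh_hz: float,
                    max_multiplier: int = 6) -> int:
    """Hypothetical dynamic policy (not NVIDIA's): smallest multiplier whose
    output rate reaches the refresh rate, capped at max_multiplier."""
    for m in range(1, max_multiplier + 1):
        if render_fps * m >= refresh_hz:
            return m
    return max_multiplier
```

For example, `output_fps(60, 6)` is 360, while a game rendering at 90 FPS on a 240-Hz display would need only 3× under this policy. Choosing the smallest sufficient multiplier keeps generation close to what the display can actually show.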

What this changes for different users

Gamers — when higher FPS actually helps

Competitive players care about raw framerate and responsiveness. Higher output framerates reduce motion blur on displays with high refresh rates and can improve perceived smoothness. But synthetic frames are approximations: they can closely match real frames in steady motion but may show artifacts during rapid, chaotic changes.

Practical advice:

- Use Multi-Frame Generation in games where motion is mostly regular (racing sims, many arena-style shooters). Avoid it in titles with unpredictable, high-frequency motion if visual consistency is critical.

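- Pair frame generation with NVIDIA Reflex or a similar latency-reduction feature. Generated frames increase perceived smoothness, but the generator must wait for the next rendered frame before it can display the frames in between, which adds input latency unless it is managed.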
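A rough way to reason about the latency cost: because interpolation needs the next rendered frame, each rendered frame is held for about one render interval. The toy model below assumes exactly that, ignores pacing optimizations, and treats the baseline latency and generation cost as measured inputs. Note that the multiplier does not appear at all; in this model, only the base render rate drives the extra delay.

```python
def hold_delay_ms(render_fps: float) -> float:
    """Toy model: interpolation holds each rendered frame for roughly one
    render interval, because the next rendered frame must exist first."""
    return 1000.0 / render_fps


def latency_with_fg_ms(base_latency_ms: float, render_fps: float,
                       gen_cost_ms: float = 0.0) -> float:
    """Estimated input latency with frame generation enabled.

    base_latency_ms: measured latency without frame generation (assumed input).
    gen_cost_ms: assumed time spent producing the generated frames.
    """
    return base_latency_ms + hold_delay_ms(render_fps) + gen_cost_ms
```

With a 30 ms baseline, this gives about 46.7 ms at a 60-FPS render rate but about 38.3 ms at 120 FPS, which is why a higher base framerate (and Reflex trimming the baseline) matters more than the generation multiplier.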

Example: if your GPU renders at 60 FPS and MFG generates five frames for each rendered one, a 360-Hz monitor receives a new frame roughly every 2.8 ms instead of every 16.7 ms, so motion looks smoother even though the renderer did no extra geometry or ray-tracing work.
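The timeline for that example can be sketched as a presentation schedule, assuming perfectly even pacing and measuring time from the first rendered frame's presentation (the interpolation hold is left out). `present_schedule` is a hypothetical helper, not part of any NVIDIA tool.

```python
def present_schedule(render_fps: float, multiplier: int, rendered_frames: int):
    """Return (timestamp_ms, kind) pairs for an evenly paced output stream in
    which each rendered frame is followed by (multiplier - 1) generated ones."""
    render_interval = 1000.0 / render_fps
    step = render_interval / multiplier  # time between presented frames
    schedule = []
    for n in range(rendered_frames):
        base = n * render_interval
        schedule.append((base, "rendered"))
        for k in range(1, multiplier):
            schedule.append((base + k * step, "generated"))
    return schedule


for ts, kind in present_schedule(60, 6, 2):
    print(f"{ts:6.2f} ms  {kind}")
```

Only one presented frame in six comes from the renderer; the other five fill the 16.7 ms gap in steps of about 2.78 ms.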

Developers and engine teams — integration and QC

Because Dynamic MFG is exposed through the NVIDIA app and engine plugins, game studios should plan validation and quality control rather than assuming “it just works.” The technique relies on motion vectors, depth buffers and consistent temporal data. Games that already ship high‑quality motion vectors and consistent temporal buffers will see the best results.
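A first validation step is checking that the per-frame inputs the generator depends on are complete and sane. The sketch below assumes a hypothetical `FrameInputs` container with row-major depth and per-pixel motion vectors; real integrations pass these buffers through NVIDIA's SDK rather than a class like this, and the 512-pixel motion limit is an arbitrary assumption.

```python
import math
from dataclasses import dataclass


@dataclass
class FrameInputs:
    """Hypothetical per-frame inputs for a frame generator (illustration only)."""
    width: int
    height: int
    depth: list            # row-major depth values, width * height entries
    motion: list           # per-pixel (dx, dy) motion vectors in pixels


def _bad_vector(dx: float, dy: float, max_motion_px: float) -> bool:
    """True if a motion vector is non-finite or implausibly large."""
    if not (math.isfinite(dx) and math.isfinite(dy)):
        return True
    return math.hypot(dx, dy) > max_motion_px


def validate(inputs: FrameInputs, max_motion_px: float = 512.0) -> list:
    """Return a list of human-readable problems (empty if none found)."""
    problems = []
    n = inputs.width * inputs.height
    if len(inputs.depth) != n:
        problems.append(f"depth has {len(inputs.depth)} values, expected {n}")
    if len(inputs.motion) != n:
        problems.append(f"motion has {len(inputs.motion)} vectors, expected {n}")
    bad_depth = sum(1 for d in inputs.depth if not math.isfinite(d))
    if bad_depth:
        problems.append(f"{bad_depth} non-finite depth values")
    bad_mv = sum(1 for dx, dy in inputs.motion if _bad_vector(dx, dy, max_motion_px))
    if bad_mv:
        problems.append(f"{bad_mv} invalid or implausible motion vectors")
    return problems
```

Running a check like this on every captured frame in a test build catches missing buffers and broken vectors before they show up as visual artifacts.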

Developer checklist:

- Audit motion‑vector precision and depth buffer completeness.

- Implement automated artifact detection for things like ghosting and incorrect occlusions introduced by generated frames.

- Test input latency across typical hardware combinations and make Reflex or equivalent a recommended pairing for competitive modes.
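One way to automate artifact detection, assuming a test build can also render the in-between frames for real as a ground-truth reference, is to compare each generated frame against its reference and flag frames where too many pixels differ. The helper names and both thresholds below are hypothetical and would need tuning per title.

```python
def artifact_score(generated, reference, pixel_tol=0.1):
    """Fraction of pixels whose value differs from the reference by more than
    pixel_tol (frames given as flat lists of values in [0, 1])."""
    if len(generated) != len(reference):
        raise ValueError("frames must have the same number of pixels")
    bad = sum(1 for g, r in zip(generated, reference) if abs(g - r) > pixel_tol)
    return bad / len(reference)


def flag_frames(pairs, pixel_tol=0.1, max_bad_fraction=0.02):
    """Return indices of (generated, reference) pairs whose artifact score
    exceeds the allowed fraction of badly reconstructed pixels."""
    return [i for i, (g, r) in enumerate(pairs)
            if artifact_score(g, r, pixel_tol) > max_bad_fraction]


reference = [0.5] * 100
clean = [0.52] * 100               # small, uniform error: acceptable
ghosted = [0.5] * 95 + [0.9] * 5   # a localized smear, like ghosting
flagged = flag_frames([(clean, reference), (ghosted, reference)])
```

Counting badly reconstructed pixels, rather than averaging error over the whole frame, keeps small but visible ghosts from being diluted by the many pixels that match.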

Streamers and creators

Higher nominal framerates make gameplay visually smoother for recording and capture, but encoder bitrates and streaming platforms ultimately limit visual quality. Streamers should test generated‑frame workflows to confirm encoders and capture tools don’t introduce stutter when synthetic frames are used.
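A simple stutter check for a capture or encode is to look at the timestamps of the frames it actually produced and flag intervals much longer than the typical one. The sketch assumes you can export per-frame timestamps in milliseconds from your capture tool; `find_stutters` and its 1.5× threshold are illustrative choices.

```python
from statistics import median


def find_stutters(timestamps_ms, factor=1.5):
    """Return indices i where the gap ending at timestamps_ms[i] is more than
    `factor` times the median frame interval."""
    intervals = [b - a for a, b in zip(timestamps_ms, timestamps_ms[1:])]
    if not intervals:
        return []
    typical = median(intervals)
    return [i + 1 for i, dt in enumerate(intervals) if dt > factor * typical]


# A 60-FPS capture with one dropped frame between 50.0 ms and 83.3 ms.
capture = [0.0, 16.7, 33.3, 50.0, 83.3, 100.0]
print(find_stutters(capture))
```

Using the median rather than the mean keeps a few long hitches from inflating the baseline they are measured against.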

Trade‑offs and limitations

- Artifacts: synthesized frames can produce ghosting, incorrect occlusions, or smeared motion in edge cases. These are more visible when the generator is asked to fill many frames between large camera jumps.

- Latency nuances: frame generation can reduce perceived motion judder but complicates end-to-end input latency budgets. Features like NVIDIA Reflex help minimize the added lag.

- Hardware scope: frame generation relies on dedicated hardware in recent NVIDIA architectures. Single-frame generation runs on RTX 40-series (Ada) GPUs, but Multi-Frame Generation has so far required RTX 50-series (Blackwell) cards, so expect the multi-frame modes to target that hardware.

- Not a substitute for GPU compute: generating extra frames can’t magically create more scene detail; it multiplies presentation frames but does not change geometry, lighting complexity, or AI NPC computation.
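Because artifacts grow with large camera jumps, a game or driver could scale back generation when motion is extreme. The heuristic below is purely hypothetical (not how DLSS behaves): it measures average motion-vector length between rendered frames and caps the multiplier above a soft limit, falling back to rendered frames only above a hard limit. Both limits are arbitrary assumptions.

```python
import math


def mean_motion(motion):
    """Average motion-vector length in pixels for a list of (dx, dy) pairs."""
    return sum(math.hypot(dx, dy) for dx, dy in motion) / len(motion)


def gated_multiplier(requested, mean_motion_px,
                     soft_limit_px=32.0, hard_limit_px=128.0):
    """Hypothetical heuristic: reduce the frame-generation multiplier when
    the scene moves a long way between rendered frames."""
    if mean_motion_px >= hard_limit_px:
        return 1                   # camera cut or violent shake: no generation
    if mean_motion_px >= soft_limit_px:
        return min(requested, 2)   # large motion: generate at most one frame
    return requested               # steady motion: honor the requested mode
```

A smooth pan with a few pixels of motion keeps 6×, while a physics-driven camera shake spanning hundreds of pixels would drop to rendered frames only, trading smoothness for fewer visible errors.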

Real‑world scenarios

- Competitive shooter on a 360‑Hz monitor: An otherwise GPU‑limited player can use MFG 6× mode to feed the monitor at high frequency, smoothing motion when aiming and tracking. However, competitive players should verify latency with Reflex enabled.

- Open-world single-player title: For cinematic camera pans and smooth cutscenes, generated frames can produce very smooth motion without taxing ray-tracing performance. Conversely, sudden physics-driven camera shakes may show artifacts.

- Cloud gaming: Providers can use multi‑frame generation to reduce server render costs while preserving a high‑refresh experience for end users — but network latency and encoding constraints remain the bottleneck.

Business and industry implications

- Changing upgrade calculus: When frame generation can multiply perceived FPS, gamers may delay GPU upgrades while still enjoying smoother motion — particularly at high refresh rates. That could flatten upgrade cycles for a segment of the market.

- Engine standards pressure: Middleware and engine toolchains will be incentivized to produce reliable motion vectors and standardized temporal outputs, making it easier for frame generation to work across many titles.

- Competitive responses: Expect AMD, Intel and middleware vendors to accelerate competing techniques (temporal upscalers, proprietary frame generators) and for cloud providers to experiment with server‑side generation to reduce rendering costs.

Three implications to watch next

- Quality vs. quantity balancing: Game settings and drivers may start providing more granular controls that trade generated‑frame count for artifact suppression or reduced latency.

- Wider adoption in non‑gaming fields: Virtual production, remote simulation and XR (if latency/comfort allow) could use multi‑frame generation to stretch hardware budgets while maintaining smoother visuals.

- New metrics: Industry benchmarking will need to evolve beyond raw rendered FPS; tests that measure perceived smoothness, artifact rates and end‑to‑end latency will become more important.
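A benchmark that goes beyond raw FPS might summarize a per-frame log like the one sketched below. The `FrameRecord` format is hypothetical: it assumes the tool can tell rendered from generated frames, has an artifact detector's verdict, and samples input-to-photon latency on some frames. Reporting rendered and presented rates side by side makes the effect of frame generation explicit.

```python
import math
from dataclasses import dataclass
from typing import Optional


@dataclass
class FrameRecord:
    """Hypothetical per-frame benchmark log entry."""
    present_ms: float                  # when the frame reached the display
    generated: bool                    # True for synthetic frames
    artifact: bool = False             # flagged by an artifact detector
    latency_ms: Optional[float] = None  # sampled input-to-photon latency


def summarize(records, duration_ms):
    """Compute rendered vs presented FPS, artifact rate among generated
    frames, mean sampled latency and 99th-percentile frame time."""
    seconds = duration_ms / 1000.0
    rendered = [r for r in records if not r.generated]
    generated = [r for r in records if r.generated]
    latencies = [r.latency_ms for r in records if r.latency_ms is not None]
    times = sorted(r.present_ms for r in records)
    intervals = sorted(b - a for a, b in zip(times, times[1:]))
    # Nearest-rank 99th percentile of frame-to-frame intervals.
    p99 = intervals[math.ceil(0.99 * len(intervals)) - 1] if intervals else None
    return {
        "presented_fps": len(records) / seconds,
        "rendered_fps": len(rendered) / seconds,
        "artifact_rate": (sum(r.artifact for r in generated) / len(generated)
                          if generated else 0.0),
        "mean_latency_ms": sum(latencies) / len(latencies) if latencies else None,
        "p99_frame_time_ms": p99,
    }


# One second at a 60-FPS render rate in 6x mode: 360 presented frames.
log = [FrameRecord(i * 1000 / 360, generated=(i % 6 != 0)) for i in range(360)]
stats = summarize(log, 1000.0)
```

Here `presented_fps` would read 360 while `rendered_fps` stays at 60; a single "FPS" number would hide that split.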

DLSS 4.5's multi-frame generation is a big step in the evolution of neural temporal techniques for graphics. It doesn't replace raw rendering horsepower, but it offers a practical lever to multiply perceived framerate without a proportional increase in rendering load, with predictable trade-offs that both gamers and studios will need to manage through testing and smart defaults.