Why under‑display Face ID is slowing Apple's full‑screen iPhone

A new design frontier—and why it’s harder than it looks

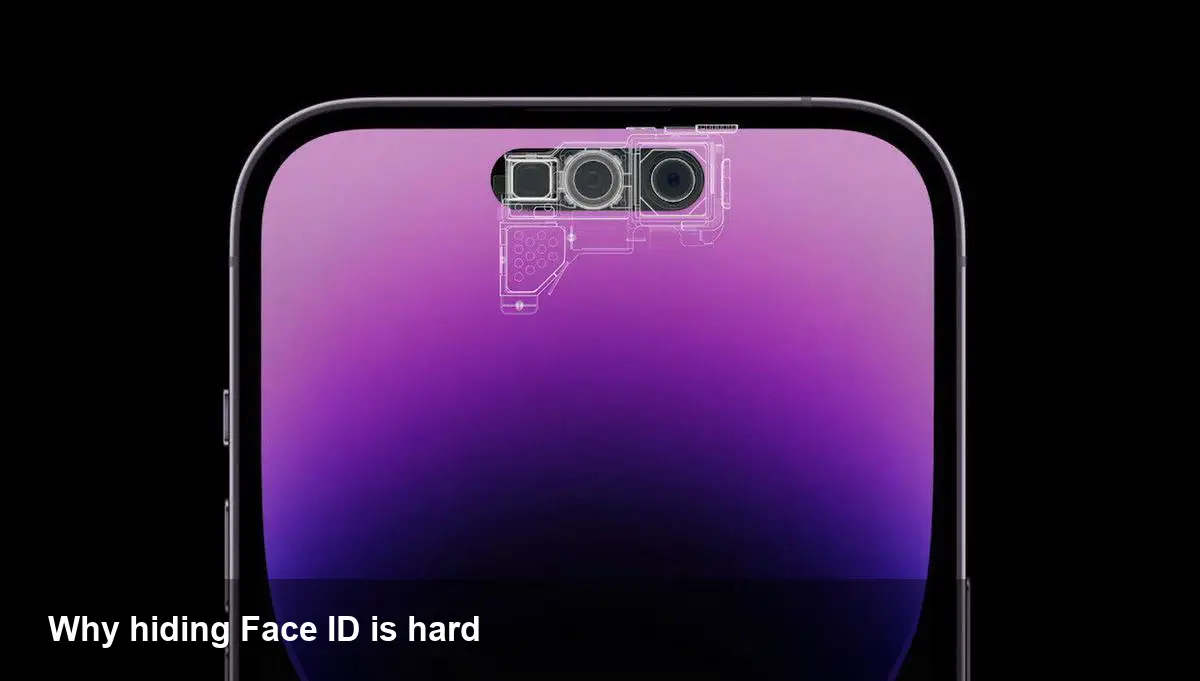

Apple has made shrinking the visible hardware on the iPhone screen a long-term design target. The goal sounds simple: an uninterrupted, edge-to-edge OLED display with no notch or visible cutouts. Getting there, though, depends on hiding complex sensors for facial authentication and front-facing cameras beneath the glass. That’s where under-display Face ID comes in—and why progress has been slower than many expected.

Brief background: Face ID, Dynamic Island and the design context

Since the iPhone X in 2017, Apple has used Face ID as the primary biometric on its flagship iPhones, both for unlocking and for authenticating payments. The TrueDepth camera system combines an infrared camera, a dot projector, and a flood illuminator to build a secure 3D map of a face. Starting with the iPhone 14 Pro, most new iPhones also feature the Dynamic Island, a software-driven UI wrapped around the pill-shaped cutout for the front sensors. It integrates notifications, Live Activities, and system indicators into a small visible area at the top of the screen.

Designers and engineers want to keep Face ID's security and the Dynamic Island's user-facing functionality while removing the visible hardware. Under-display Face ID promises exactly that, but implementing it without sacrificing reliability, battery life, or display quality is a significant engineering challenge.

The technical hurdles in plain terms

Putting Face ID's infrared hardware under an OLED panel is much harder than hiding a single selfie camera there. Key problems include:

- Optical transmission: The pixels and thin-film layers above a sensor reduce how much visible and infrared light gets through. That degrades front-camera image quality and weakens the reflected infrared pattern that Face ID's infrared camera reads.

- Dot projector operation: Face ID's structured-light system projects thousands of tiny infrared dots, which Face ID's infrared camera must read back accurately. Any distortion or loss in the cover glass or display stack degrades the 3D map.

- Thermal and power constraints: Compensating with higher-power illumination or more sensitive sensors drains the battery and can create heat-management problems in a slim chassis.

- Manufacturing yield: Building a display region whose pixels are sparser or more transparent to infrared adds production complexity. Lower yields mean higher costs and a slower production ramp.
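A back-of-envelope model shows how the optical and power problems interact. If both the dot projector and the infrared camera sit under the panel, the infrared pattern crosses the display stack twice (out to the face and back), so the returned signal scales with the square of the one-way transmittance. The sketch below assumes a single, uniform one-way transmittance `t`, a hypothetical parameter rather than a measured value for any real panel, and it ignores scattering, diffraction from the pixel grid, sensor noise, and eye-safety limits on emitter power.

```python
def round_trip_fraction(one_way_transmittance: float) -> float:
    """Fraction of projected infrared light that survives both passes.

    Simplifying assumption: the same transmittance applies outbound
    (projector -> face) and inbound (face -> infrared camera).
    """
    if not 0.0 < one_way_transmittance <= 1.0:
        raise ValueError("transmittance must be in (0, 1]")
    return one_way_transmittance ** 2


def power_scale_to_compensate(one_way_transmittance: float) -> float:
    """Factor by which emitter power would have to rise to return the same
    signal as an unobstructed sensor, under the same assumptions."""
    return 1.0 / round_trip_fraction(one_way_transmittance)


if __name__ == "__main__":
    # Hypothetical one-way transmittance values, for illustration only.
    for t in (0.9, 0.5, 0.2):
        print(f"t={t:.1f}  returned={round_trip_fraction(t):.2f}  "
              f"power x{power_scale_to_compensate(t):.1f}")
```

With a hypothetical one-way transmittance of 0.5, only a quarter of the light completes the round trip, so the emitter would need about four times the power to return the same signal. That is the battery and heat trade-off in concrete form.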

Because Face ID is core to device security and day-to-day convenience, Apple can’t simply ship a less-reliable implementation. Users expect instant authentication under a variety of lighting conditions and consistent performance across millions of devices.

A phased design approach: smaller visible element, not instant disappearance

Instead of a sudden jump to an entirely invisible sensor array, Apple appears to be taking incremental steps. One practical path is progressively reducing the size of the Dynamic Island or the visible sensor housing while moving more functions under the display. That minimizes disruption to the user experience and gives partners time to adjust manufacturing processes.

For users, this means small, iterative visual changes over multiple model cycles rather than one dramatic redesign. For accessory makers and carriers, it means near-term cases, screen protectors, and repair workflows stay usable, with gradual updates rather than a clean break.

Concrete examples: what this changes for users and developers

- App UI and layout: Developers should keep respecting safe area insets and designing Live Activities for the Dynamic Island's compact, minimal, and expanded presentations. Even if the island shrinks, Apple will likely keep the same system indicators to avoid breaking app content. Expect subtle changes to recommended padding and animation timings as the visible area changes across hardware generations.

- Face ID reliability expectations: Imagine unlocking your phone in dim light. If under-display sensors lose accuracy, users will notice more unlock failures or slower authentication. That would push Apple either to fall back to the device passcode more often or to adjust when the display switches off the pixels above the sensors to improve sensor performance.

- Hardware accessories: Case and screen protector designers build cutouts and mounting tolerances around sensor clusters. As the front cluster shrinks, accessories can be sleeker, but they'll need new tooling. Screen protector makers must also ensure new materials don't block the infrared light that under-display sensors depend on.

- Enterprise and security: IT teams provisioning thousands of devices will watch authentication metrics. A change in the false reject rate (FRR) affects usability, and a change in the false accept rate (FAR) affects risk posture. Apple will likely provide diagnostic data and MDM flags during transition periods.
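FRR and FAR are simple ratios: FRR is the share of genuine attempts that were rejected, and FAR is the share of impostor attempts that were accepted. The sketch below computes both from a hypothetical per-attempt log; the `Attempt` record is an illustration only, not an Apple or MDM API. It also includes a crude estimate of how often a genuine user would fail every allowed retry and land on the passcode. That estimate assumes independent attempts, which is optimistic: real failures cluster (same dim room, same angle), so the true fallback rate is likely higher.

```python
from dataclasses import dataclass
from typing import Iterable


@dataclass(frozen=True)
class Attempt:
    """One biometric attempt (hypothetical log format, not an Apple or MDM API)."""
    genuine: bool   # True if the enrolled user made the attempt
    accepted: bool  # True if the system accepted the attempt


def error_rates(attempts: Iterable[Attempt]) -> tuple[float, float]:
    """Return (FRR, FAR); NaN when there are no attempts of that kind."""
    genuine = false_rejects = impostor = false_accepts = 0
    for a in attempts:
        if a.genuine:
            genuine += 1
            if not a.accepted:
                false_rejects += 1
        else:
            impostor += 1
            if a.accepted:
                false_accepts += 1
    frr = false_rejects / genuine if genuine else float("nan")
    far = false_accepts / impostor if impostor else float("nan")
    return frr, far


def passcode_fallback_rate(frr: float, max_attempts: int) -> float:
    """Chance a genuine user fails every allowed attempt, assuming attempts
    are independent (an optimistic simplification)."""
    return frr ** max_attempts


if __name__ == "__main__":
    # Made-up sample: 100 genuine attempts (5 rejected), 1,000 impostor attempts (none accepted).
    log = ([Attempt(genuine=True, accepted=True)] * 95
           + [Attempt(genuine=True, accepted=False)] * 5
           + [Attempt(genuine=False, accepted=False)] * 1000)
    frr, far = error_rates(log)
    print(f"FRR={frr:.1%}  FAR={far:.2%}")
    # max_attempts=2 is an arbitrary illustrative value, not Face ID's actual retry policy.
    print(f"fallback after 2 tries: about {passcode_fallback_rate(frr, 2):.2%}")
```

A sample this small says little about FAR: measuring very low accept rates directly takes a very large number of impostor attempts, so fleet telemetry is far better at spotting FRR shifts than FAR shifts.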

Business and supply-chain implications

Moving sensitive sensor arrays under the display increases supplier complexity. Display fabs, sensor manufacturers, and optical materials suppliers must coordinate tolerances and coatings at scale. That coordination adds capital costs and puts production ramps for launch windows at risk: lower yields could mean limited initial supply, higher prices, or staged geographic rollouts.
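The yield risk is easy to underestimate because yields multiply across sequential steps, and the cost of scrapped units is spread over the good ones. The sketch below uses made-up step yields and a made-up unit cost purely to show the arithmetic; it assumes defects at each step are independent and that failed units have no salvage value.

```python
from math import prod


def overall_yield(step_yields: list[float]) -> float:
    """Overall yield when every unit must pass each step, with independent defects."""
    for y in step_yields:
        if not 0.0 < y <= 1.0:
            raise ValueError("each step yield must be in (0, 1]")
    return prod(step_yields)


def cost_per_good_unit(cost_per_started_unit: float, step_yields: list[float]) -> float:
    """Spread the cost of scrapped units over the units that pass every step."""
    return cost_per_started_unit / overall_yield(step_yields)


if __name__ == "__main__":
    # Hypothetical numbers for illustration only.
    baseline = [0.95, 0.95]                # e.g. panel fabrication, module assembly
    with_sensor_zone = baseline + [0.90]   # an extra, hypothetical infrared-transparent-zone step
    for name, steps in (("baseline", baseline), ("with sensor zone", with_sensor_zone)):
        print(f"{name}: yield={overall_yield(steps):.1%}, "
              f"cost per good unit={cost_per_good_unit(100.0, steps):.2f}")
```

Under these hypothetical numbers, one extra step with 90% yield raises the cost per good unit by a factor of 1/0.9, roughly 11%, before counting any new equipment.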

Retail repair ecosystems also face tradeoffs. If several sensors are embedded under the OLED stack, replacing a cracked display may require additional recalibration steps or paired component replacements—pushing repair costs up and complicating third-party repairs.

Three future implications to watch

- Biometric diversification: If integrating all Face ID components under glass remains difficult, Apple could combine partial under-display sensors with other biometrics (e.g., under-display Touch ID) or rely on improved software-level fusion to maintain security and speed.

- App and OS continuity: Apple is likely to smooth platform transitions with backward- and forward-compatible UI APIs. Developers who test dynamic safe areas and use system-friendly layouts will have fewer issues as hardware changes.

- Competitive pressure and timelines: Rivals adopting under-display cameras and fingerprint sensors may accelerate the market narrative, but Apple has historically prioritized reliability over being first. Expect incremental visual changes to the top of the iPhone display in the coming model years rather than an abrupt disappearance.

What this means for you right now

If you’re a developer: keep validating your UI around the Dynamic Island and follow Apple’s Human Interface Guidelines for safe areas. Test authentication flows in low light and under poor network conditions to catch edge cases in fallback and retry paths.

If you run a hardware or accessory business: plan tooling updates but avoid discarding existing inventory immediately. Modular tooling and flexible production runs can ease the transition when the visible sensor area finally shrinks.

If you’re buying: don’t expect a perfect full-screen iPhone overnight. If seamless facial unlock and predictable app behavior matter to you, one or two more hardware cycles of refinement may be worth waiting for.

Apple’s ambition to hide Face ID under the screen promises a cleaner look and fewer visible interruptions. The company’s careful, iterative approach reflects the real technical complexity and the user expectations it must sustain. The next few iPhone generations will likely show steady progress—smaller sensor footprints, smarter software handling, and tighter integration between displays and biometrics—rather than a single dramatic “all-screen” moment.