Immersive Navigation: How Google Maps Reimagines Driving

What’s changed and why it matters

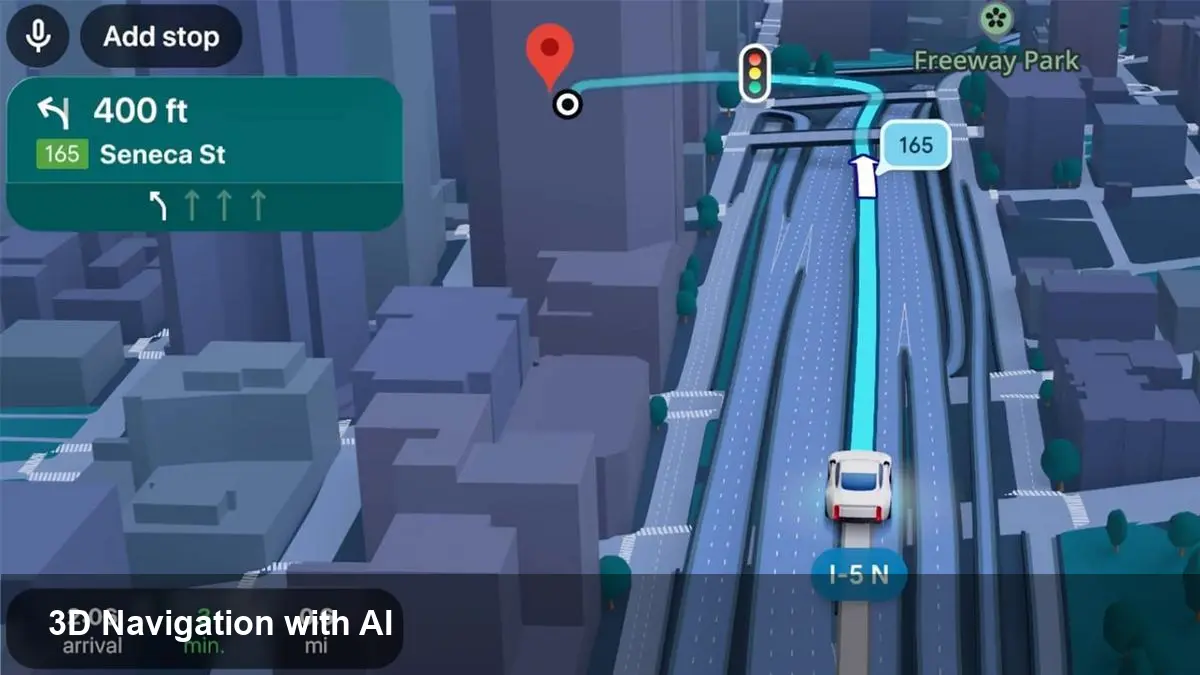

Google is rolling out an “Immersive Navigation” experience that layers photoreal 3D mapping, augmented reality cues and on‑device AI into driving directions. The company frames the move as the biggest refresh to how Maps guides drivers in more than a decade. For everyday drivers, fleet operators and developers, that combination shifts navigation from flat turn lists to a visual, context‑aware guide that aims to reduce missed turns, lessen cognitive load and speed up last‑mile tasks.

A quick background on Google Maps and the new tech

Google Maps has evolved from simple map tiles and routing algorithms into a data‑rich platform: satellite imagery, Street View, local business data, Live View AR and real‑time traffic. Immersive Navigation stitches several of these capabilities together. It uses high‑fidelity 3D reconstructions of roads and landmarks, overlays contextual graphics for lane guidance and draws on Live View’s camera‑based orientation to help drivers visually identify complex junctions.

Under the hood there are three enabling pieces:

- Photoreal 3D map models built from Street View and aerial imagery.

- AI models that infer what’s important to the driver (lane markings, exits, signage) and generate simplified visual directions.

- Fusion with Live View AR to anchor instructions to real‑world imagery when needed.

How this changes real driving scenarios

Here are concrete use cases where Immersive Navigation could make a practical difference.

- Complex urban interchanges: In dense cities with stacked ramps and short decision windows, the 3D view highlights the correct lane and shows the exit geometry from the driver’s perspective. Rather than reading a text instruction ("Take Exit 14B"), drivers see a virtual arrow over the exact ramp.

- Unfamiliar neighborhoods: When a driver pulls into a new area, Live View can briefly surface camera‑anchored cues — for example, pointing out a turn concealed by parked trucks or temporary signage — making it easier to find the right street.

- Delivery and rideshare: For drivers who stop frequently, the system can emphasize driveway entrances, loading zones, and building facades to reduce time spent circling blocks. That can shave minutes off each stop and add up across a shift.

- Night and low‑visibility driving: 3D representations of nearby landmarks (big buildings, stadiums) help with orientation when road signs are obscured or difficult to read.

What developers and product teams should plan for

Immersive Navigation isn’t just consumer polish; it also opens new product and integration opportunities.

- APIs and SDK surface areas: Expect demand for access to 3D tiles, enriched lane‑level metadata and event hooks (when a lane change is recommended, when AR guidance engages). Product teams building navigation SDKs or in‑car software will want low‑latency access.

- Data and testing needs: High‑fidelity visual directions rely on up‑to‑date imagery and accurate map vector data. Teams building routing or logistics products should plan for more frequent map refreshes and robust testing in edge cases where the AI might mislabel a feature.

- UX safeguards against distracted driving: Immersive visuals can be powerful but also distracting. Developers integrating the feature into apps or car displays will need to follow platform guidelines for safe UI, prioritize glanceability, and possibly limit AR engagement when vehicle speed or driver‑attention metrics indicate risk.

- Cost and bandwidth considerations: Rich 3D tiles and Live View overlays increase data use and compute. Products aimed at commercial fleets should evaluate offline caching, edge processing and pricing for heavy map usage.
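The idea of limiting AR engagement when speed or attention signals indicate risk can be sketched as a small policy function. This is an illustration only, not part of any Google Maps API: `DrivingContext`, `should_show_ar`, the attention score and both thresholds are hypothetical placeholders that a team would replace with values drawn from its own safety testing and the relevant platform guidelines.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DrivingContext:
    """Hypothetical snapshot of signals an app might already have."""
    speed_kmh: float           # current vehicle speed
    attention_score: float     # 0.0 (distracted) to 1.0 (attentive); assumed metric
    at_complex_junction: bool  # e.g. flagged by the routing layer


# Placeholder thresholds, not values from any platform guideline.
MAX_AR_SPEED_KMH = 50.0
MIN_ATTENTION_SCORE = 0.6


def should_show_ar(ctx: DrivingContext) -> bool:
    """Return True only when AR cues are likely useful and low-risk."""
    if not ctx.at_complex_junction:
        return False  # keep AR limited to places where it adds value
    if ctx.speed_kmh > MAX_AR_SPEED_KMH:
        return False  # too fast for camera-anchored overlays
    return ctx.attention_score >= MIN_ATTENTION_SCORE
```

Keeping the decision in one pure function makes it easy to unit-test and to tighten later if driver studies suggest the overlays are engaging too often.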

Business implications and opportunities

This evolution in navigation touches several business areas:

- Local commerce and discovery: Enhanced visual cues and 3D building facades make storefronts more prominent. Businesses with physical locations could see higher footfall if their facades are accurately modeled.

- Fleet optimization: Faster and more reliable first‑time arrivals reduce idle time. For delivery companies, even small reductions in per‑stop minutes scale into substantial cost savings.

- Automotive partnerships: Car makers and infotainment vendors will evaluate deeper integrations — especially as richer visuals become expected. That creates opportunities for OEM licensing or premium navigation bundles.

- Advertising and privacy tradeoffs: Visual prominence could increase advertising value (for example, through highlighted destinations), but monetizing it will require careful privacy and user‑consent handling given the level of contextual data involved.

Potential downsides and constraints

No technology is without limitations. Consider these practical constraints:

- Coverage: New 3D/AR features usually roll out first in dense urban centers; rural and less‑mapped areas may see delayed availability.

- Data and battery drain: Continuous camera use for Live View and streaming of high‑resolution map models consume more data and battery than traditional navigation.

- Visual clutter and trust: Overlaid graphics must be minimal and reliable. If AI occasionally points to the wrong entrance or lane, drivers can lose trust in the system quickly.

- Safety and regulation: Regulators may scrutinize in‑car AR for distracted driving. Automakers and map providers will need to collaborate on safe interaction models.

Practical steps for teams and drivers

For developers and product managers:

- Prototype with limited AR triggers (for example, only at complex intersections) and measure driver behavior before expanding.

- Build offline fallbacks and offer a low‑bandwidth mode for fleet customers.

- Include explainability logging: when the AI suggests a lane change, log the map evidence so you can audit errors.
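One way to approach the explainability logging suggested above is to write a structured record each time a lane change is recommended. The sketch below is a minimal Python example under stated assumptions: the `LaneSuggestionRecord` fields, the `nav.explain` logger name and the evidence identifiers are all hypothetical, and a real system would log whatever map evidence its own models actually consume.

```python
import json
import logging
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone

logger = logging.getLogger("nav.explain")  # hypothetical logger name


@dataclass
class LaneSuggestionRecord:
    """Hypothetical audit record for one lane-change suggestion."""
    route_id: str
    suggested_lane: int
    map_version: str         # which map snapshot the model saw
    evidence: list           # e.g. IDs of the lane markings or signs used
    model_confidence: float
    timestamp: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )


def log_lane_suggestion(record: LaneSuggestionRecord) -> str:
    """Serialize the record as one JSON line, log it, and return it."""
    line = json.dumps(asdict(record), sort_keys=True)
    logger.info(line)
    return line
```

Recording the map version alongside the evidence lets an auditor tell apart errors caused by stale map data from errors caused by the model itself.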

For drivers and fleet operators:

- Use the feature selectively at first (try it in familiar areas) and monitor battery/data impact.

- For commercial fleets, test route variations in a controlled pilot and measure stop time improvements.
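For the fleet pilot described above, the basic comparison can be as simple as the difference in mean per‑stop time between control and pilot routes, scaled to a shift. The sketch below assumes stop durations are already recorded in minutes; the function name `stop_time_savings` and the sample numbers are placeholders for illustration, not measured results.

```python
from statistics import mean


def stop_time_savings(control_min, pilot_min, stops_per_shift):
    """Compare mean per-stop minutes between control and pilot routes.

    Returns (minutes saved per stop, minutes saved per shift).
    A negative value means the pilot routes were slower.
    """
    if not control_min or not pilot_min:
        raise ValueError("both groups need at least one observation")
    saved_per_stop = mean(control_min) - mean(pilot_min)
    return saved_per_stop, saved_per_stop * stops_per_shift


# Placeholder observations in minutes, for illustration only.
control = [4.0, 5.0, 6.0]
pilot = [3.5, 4.5, 5.5]
per_stop, per_shift = stop_time_savings(control, pilot, stops_per_shift=100)
print(f"Saved {per_stop:.1f} min per stop, {per_shift:.0f} min per shift")
```

A difference in means alone does not show that the improvement is real; with actual pilot data, teams would also want enough stops per group and a significance test before drawing conclusions.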

Three forward‑looking implications

1) Navigation will increasingly be visual-first rather than text-first, shifting UX design toward glanceable, perspective‑aware cues.

2) Real‑time 3D mapping will become a competitive moat for companies that can update imagery and semantic labels rapidly, a capability critical for logistics and autonomy.

3) We’ll see tighter convergence between map platforms and car OEMs, with navigation experiences bundled as a differentiator in vehicle ecosystems.

Google’s Immersive Navigation marks a practical step toward navigation that looks and feels like the real world, not just an abstract map. Expect a period of iterative improvement — smarter lane suggestions, wider coverage, and tighter safety controls — as the feature moves from early rollouts into daily driving for millions of users.